Data Quality in Machine Learning

Pickl AI

JULY 24, 2024

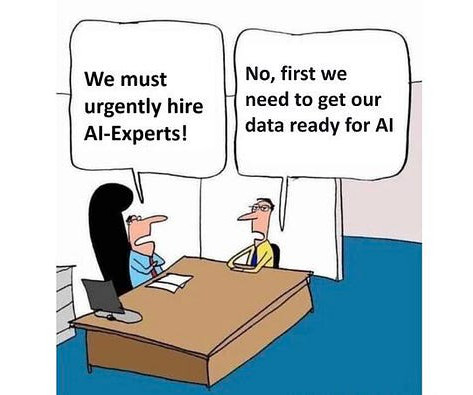

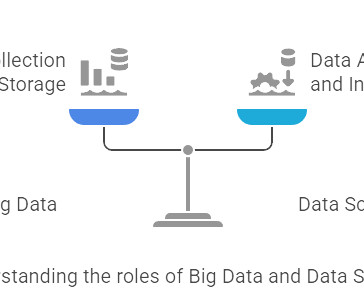

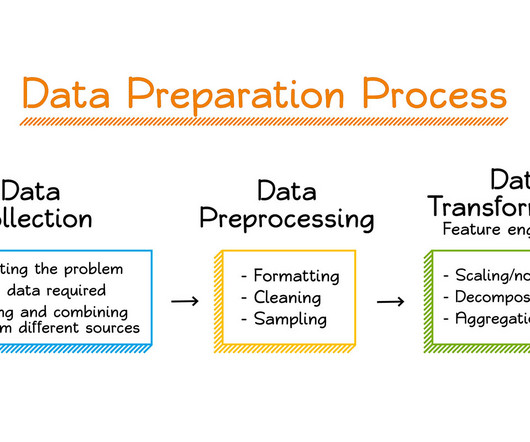

Summary: Data quality is a fundamental aspect of Machine Learning. Poor-quality data leads to biased and unreliable models, while high-quality data enables accurate predictions and insights.

What is Data Quality in Machine Learning?

Bias in data can result in unfair and discriminatory outcomes.
