Deploying Large NLP Models: Infrastructure Cost Optimization

The MLOps Blog

MARCH 23, 2023

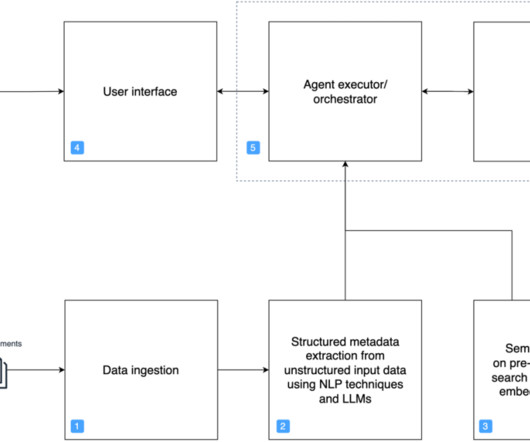

Such scenarios inevitably lead to stacking new layers of neural connections, which makes the model larger. Deploying these larger models requires fast, expensive GPUs, which ultimately adds to the infrastructure cost. This is especially true when the model serves real-time applications, such as chatbots or virtual assistants, where responses must come back with low latency.
