Writing Robust Tests for Data & Machine Learning Pipelines

Eugene Yan

SEPTEMBER 3, 2022

Or why I should write fewer integration tests.


Data Science Dojo

APRIL 3, 2023

Gone are the days of manually coding every step of the process – now, with drag-and-drop interfaces, streamlining your ML pipeline has become more accessible and efficient than ever before. These tools provide a visual interface for building machine learning pipelines, making the process easier and more efficient for data scientists.



Towards AI

APRIL 7, 2024

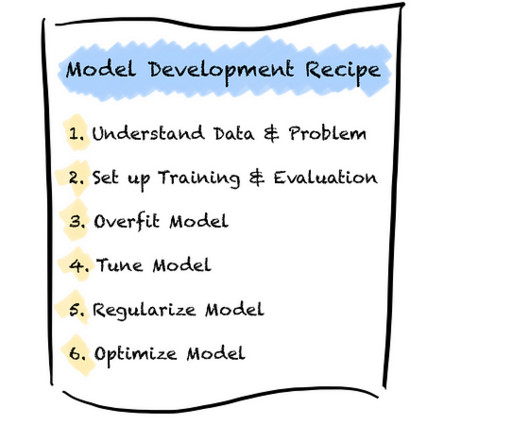

It is the data we feed it with and a reliable pipeline. Overall, we need high confidence in our pipeline, model, and understanding of the problem and data. However, we cannot test many of the above points with unit tests as in traditional software development. A good trick is to write specific functions first.
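As a minimal sketch of the "write specific functions first" idea: a pipeline step is pulled out into a small, pure function so that it can be unit tested in the traditional way. The function name `fill_missing_with_median` and the sample values are hypothetical, chosen only for illustration; they do not come from the article.

```python
import statistics


def fill_missing_with_median(values):
    """Replace None entries with the median of the observed values.

    Hypothetical pipeline step, kept small and pure so it is easy to test.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("no observed values to compute a median from")
    median = statistics.median(observed)
    return [median if v is None else v for v in values]


def test_fill_missing_with_median():
    # Odd number of observed values: the median is the middle element.
    assert fill_missing_with_median([5, None, 1, 3]) == [5, 3, 1, 3]
    # Input without gaps is returned unchanged.
    assert fill_missing_with_median([1, 2]) == [1, 2]


test_fill_missing_with_median()
```

Because the function takes plain values and returns plain values, a test runner such as pytest can exercise it without loading real data or a trained model.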


Towards AI

MARCH 7, 2024

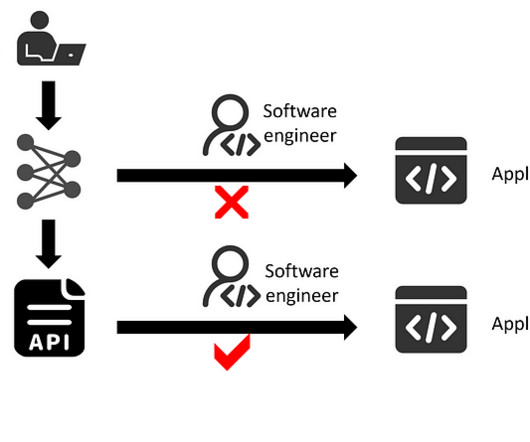

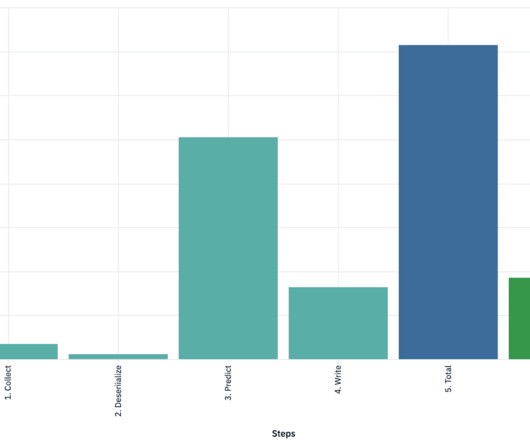

In the realm of IT application development, especially as a data scientist, it’s customary to encapsulate data processing and model inference pipelines into an API service. This API service essentially acts as a URL endpoint for invoking your AI model.

Dataconomy

FEBRUARY 26, 2024

This blog will provide an overview of performance testing fundamentals, identify prevalent performance bottlenecks, and offer strategies for proficiently executing these tests. What is performance testing? How can you perform performance testing for your mobile applications?

DagsHub

SEPTEMBER 5, 2023

This is where CI/CD pipelines come into play, streamlining the process effectively. Let’s explore how the same tools that helped us build a continuous training pipeline (Amazon SageMaker, DagsHub, and MLflow) can help us deploy a model. They continuously learn and enhance their performance with additional data.

Towards AI

FEBRUARY 2, 2024

Gradio is simply a great choice for creating a customizable user interface for machine learning models to test your proof of concept. And we’re also importing the pipeline function from the Hugging Face Transformers library, which is very good for working with pre-trained transformer models in NLP.

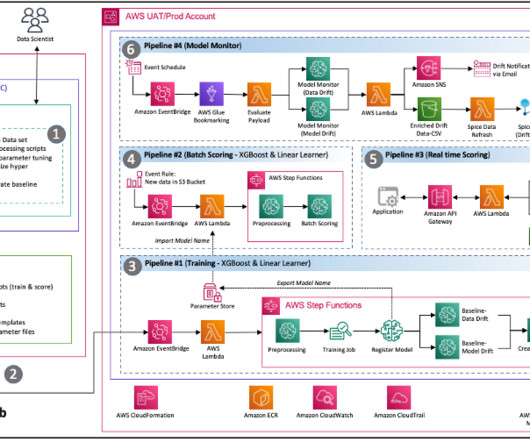

AWS Machine Learning Blog

NOVEMBER 9, 2023

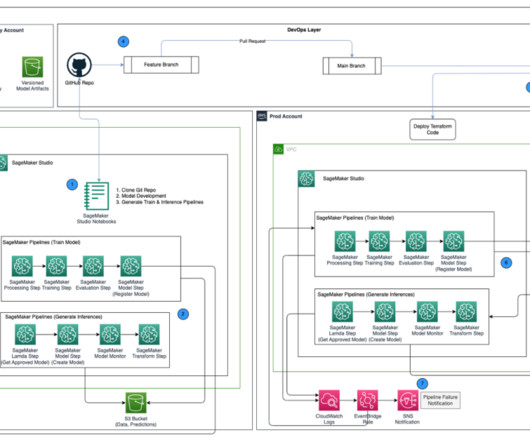

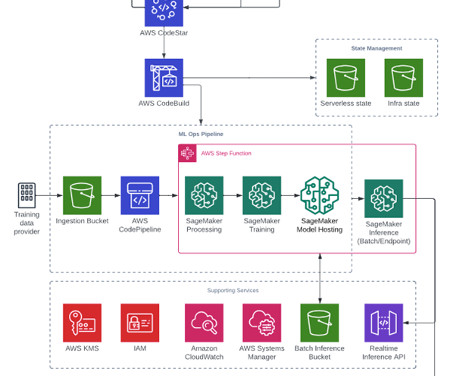

Prod environment – Where the ML pipelines from dev are promoted to as a first step, and scheduled and monitored over time. CI/CD and source control – The deployment of ML pipelines across environments is handled through CI/CD set up with Jenkins, along with version control handled through GitHub.

AWS Machine Learning Blog

JANUARY 5, 2024

There are dependencies and complexities with integrating third-party tools into the MLOps pipeline. Wipro further accelerated their ML model journey by implementing Wipro’s code accelerators and snippets to expedite feature engineering, model training, model deployment, and pipeline creation.

Towards AI

APRIL 7, 2024

This article seeks to also explain fundamental topics in data science such as EDA automation, pipelines, ROC-AUC curve (how results will be evaluated), and Principal Component Analysis in a simple way. SweetViz is an open-source Python library that generates visualizations that let you begin your EDA by writing two lines of code!

Towards AI

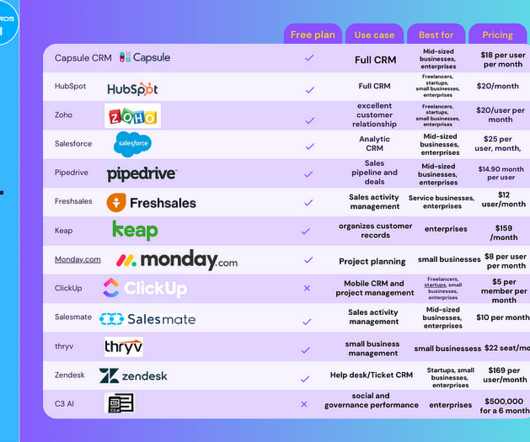

FEBRUARY 22, 2024

Predictive Sales Forecasting: To gain insights into future sales trends and pipeline health for making informed decisions. Test Before You Invest: Test the software using free trials or demos to ensure the software fits your needs perfectly. Minimal AI Features: No true AI features except basic suggestions and auto-fill.

Becoming Human

APRIL 19, 2024

This radical method has the power to completely change how software is developed, tested, and implemented. Automated Testing: By automating the creation of test cases, generative AI can expedite the software development process’ testing phase.

phData

MARCH 27, 2023

Had I read a blog like this, I would have had no problem, which is precisely why I wanted to write this blog. And even more good news, I’m going to share all my learnings so that when you take this test, you’ll have everything you need to pass the first time around.

AWS Machine Learning Blog

OCTOBER 2, 2023

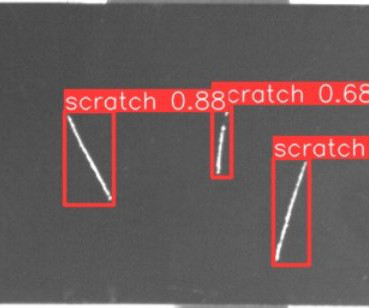

In Part 1 of this series, we drafted an architecture for an end-to-end MLOps pipeline for a visual quality inspection use case at the edge. The focus on managed and serverless services reduces the need to operate infrastructure for your pipeline and allows you to get started quickly. Labeling jobs are used to manage labeling workflows.

ODSC - Open Data Science

FEBRUARY 8, 2023

Providing an example of the company’s goal with Bard, Pichai went on to write, “Bard can be an outlet for creativity, and a launchpad for curiosity, helping you to explain new discoveries from NASA’s James Webb Space Telescope to a 9-year-old, or learn more about the best strikers in football right now, and then get drills to build your skills.”

AWS Machine Learning Blog

NOVEMBER 15, 2023

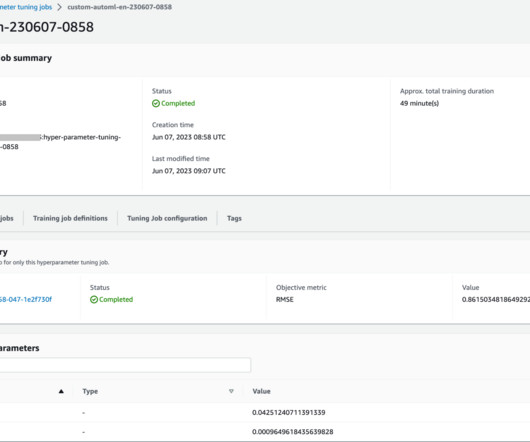

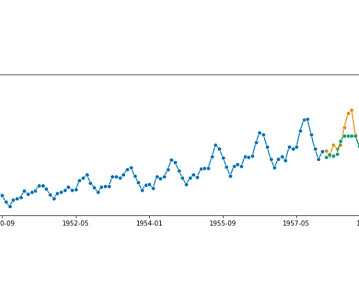

Such preprocessing techniques could be applied individually or be combined in a pipeline. The dataset is split into training and testing data frames and uploaded to the SageMaker session default S3 bucket. Training script template. The AutoML workflow in this post is based on scikit-learn preprocessing pipelines and algorithms.

Iguazio

NOVEMBER 16, 2023

This includes ML experts who can develop, train and deploy models, DevOps engineers for the operational aspects, including CI/CD pipelines, monitoring, and ML infrastructure management, developers to build the platform's UI, APIs, and other software components, and data engineers for managing data pipelines, storage, and ensuring data quality.

AWS Machine Learning Blog

FEBRUARY 29, 2024

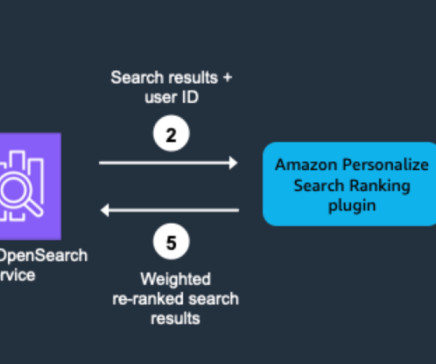

Populating the index with representative data facilitates thorough testing and validation of the plugin. Set up search pipelines to activate the plugin’s functionality. Search pipelines contain request preprocessors and response postprocessors that transform queries and results. For values, specify true or false.

Mlearning.ai

JULY 10, 2023

Everything you need to know about Kubeflow Pipelines for machine learning pipelines. Kubeflow Pipelines (KFP) is a powerful tool that enables you to build, deploy, and run machine learning pipelines in a scalable and reproducible manner using Docker containers.

DagsHub

SEPTEMBER 7, 2023

Building pipelines is a one-off task, which ML practitioners can later use to train and deploy their models without any help from the MLOps team. The goal of the project is to build a custom two-stage pipeline that automates the data processing and training process. We will first create a simple image-segmentation model to automate.

PyImageSearch

APRIL 3, 2023

As an engineer, your work might include more than just running the deep learning models on a cluster equipped with high-end GPUs and achieving state-of-the-art results on the test data. blob ) as required by OAK hardware test_data : It contains a few vegetable images from the test set, which the classify_image.py

Iguazio

DECEMBER 14, 2023

Drawing from their extensive experience in the field, the authors share their strategies, methodologies, tools and best practices for designing and building a continuous, automated and scalable ML pipeline that delivers business value. Why Did the Authors Decide to Write this Book? Exploratory data analysis (EDA) and modeling.

IBM Journey to AI blog

NOVEMBER 14, 2023

Moreover, 36% of developers struggle with the collaboration between development and IT Operations, leading to inefficiencies in the development pipeline. To compound these issues, repeated surveys highlight “testing” as the primary area causing delays in project timelines. How does Wazi as a Service help drive modernization?

The MLOps Blog

MARCH 15, 2023

This article is a real-life study of building a CI/CD MLOps pipeline. CI/CD pipeline: key thoughts and considerations. Continuous integration and continuous deployment (CI/CD) are crucial in ML model deployments because they allow faster and more efficient model updates and enhancements. S3 buckets.

Smart Data Collective

MAY 18, 2022

A high-quality testing platform integrates easily with all the data analytics and optimization solutions that QA teams use in their work, simplifies the testing process, collects all reporting and analytics in one place, can significantly improve team productivity, and speeds up releases. This is not entirely true. Data reporting.

AWS Machine Learning Blog

JUNE 16, 2023

The SambaSafety data science team used a code repository solution external to AWS; the final pipeline had to be intelligent enough to trigger based on updates to their code base, which was written primarily in R. The solution delivered by Firemind for SambaSafety’s data science team was built around two ML pipelines.

Mlearning.ai

JANUARY 3, 2024

Building a PoC RAG pipeline is not overly complex. However, to enhance its robustness, thorough testing on a dataset that accurately mirrors the production distribution is imperative. Ground Truth or known correct response Datapoints required for evaluating RAG pipelines Evaluation Metrics Ragas Metrics A.

AWS Machine Learning Blog

MARCH 21, 2023

It contains over 300 built-in data transformation steps to aid with feature engineering, normalization, and cleansing to transform your data without having to write any code. We do this in the custom transform step because Data Wrangler doesn’t have a built-in transform for this task as of this writing. Choose Export to.

SEPTEMBER 25, 2023

Devs shouldn’t be neck-deep in evaluation pipelines just to test their software, so we solve that complexity for them. Watto securely uses this contextual data to build high-quality documents/reports that employees spend quarters writing and getting reviewed. Gleam Gleam founders Emeka Itegbe (left) Oliver Keh.

The MLOps Blog

MARCH 28, 2023

At the time of this writing, Brainly has over 300 million monthly users across the globe. The ML infrastructure team makes it easy for the AI teams to create training pipelines with internal tools that make their workflow easier. These datasets would go into the training pipelines they have already set up.

ODSC - Open Data Science

MAY 25, 2023

Build tuned auto-ML pipelines, with common interface to well-known libraries (scikit-learn, statsmodels, tsfresh, PyOD, fbprophet, and more!) We provide extension templates for all supported learning tasks to enable you to write your own components Option 1: you want an estimator in sktime? Annotation? Something else?

O'Reilly Media

MARCH 26, 2024

In Borges’ fable Pierre Menard, Author of The Quixote, the eponymous Monsieur Menard plans to sit down and write a portion of Cervantes’ Don Quixote. Not to transcribe, but to rewrite the epic novel word for word: His goal was never the mechanical transcription of the original; he had no intention of copying it. joined Flickr.

ODSC - Open Data Science

DECEMBER 19, 2023

These professionals are responsible for creating and maintaining prompts for AI models, redlining, and finetuning models through tests and prompt work. They use their knowledge of data warehousing, data lakes, and big data technologies to build and maintain data pipelines. Prompt Engineer Prompt engineers are in the wild west of AI.

Smart Data Collective

MARCH 30, 2022

In it, first and foremost, all Gen1 writes need to be halted. When all the data has been transferred, stop all writes to Gen1 and redirect all workloads to Gen2. Dual pipeline pattern. In this pattern, you start migrating data from Gen1 to Gen2 (Azure Data Factory is highly recommended for dual pipeline migration).

AWS Machine Learning Blog

AUGUST 14, 2023

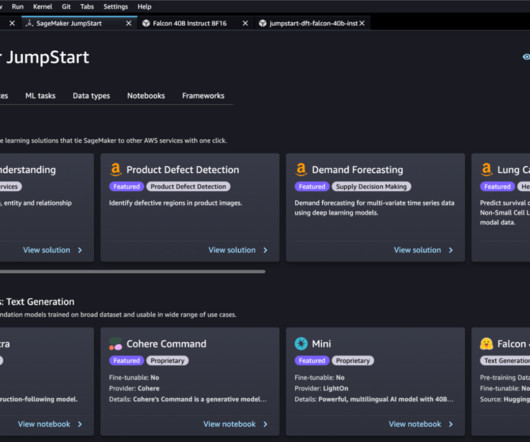

In this post, we showcase how to build an end-to-end generative AI application for enterprise search with Retrieval Augmented Generation (RAG) by using Haystack pipelines and the Falcon-40b-instruct model from Amazon SageMaker JumpStart and Amazon OpenSearch Service. Initialize DocumentStore and index documents.

AWS Machine Learning Blog

FEBRUARY 13, 2024

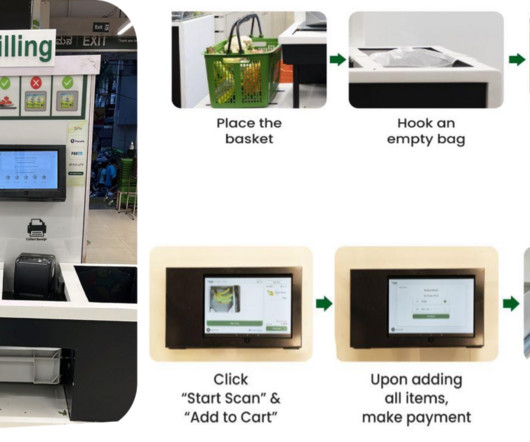

Split data into train, validation, and test sets. BigBasket used SageMaker notebooks to train their ML models and were able to easily port their existing open source PyTorch and other open source dependencies to a SageMaker PyTorch container and run the pipeline seamlessly. Their starting training data size was over 1.5

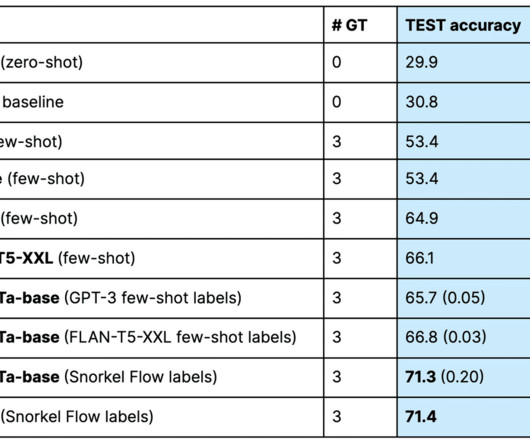

Snorkel AI

SEPTEMBER 20, 2023

At the time of this writing, ChatGPT warned users that its pre-training data contains no information after September 2021. The model learns the gaps between what it currently produces and what the training pipeline expected and adjusts its “attention” to specific features and patterns. Out-of-date information.

IBM Journey to AI blog

NOVEMBER 24, 2023

Subsequent phases are build and test and deploy to production. Further, for re-write initiatives, one needs to map functional capabilities to legacy application context so as to perform effective domain-driven design/decomposition exercises. Let us explore the Generative AI possibilities across these lifecycle areas.

phData

MARCH 22, 2023

Transitioning work to Snowpark allows for faster ML deployment, easier scaling, and robust data pipeline development. Complex Transformations Data engineers can maintain all of their complex transformation pipelines as code. Leveraging test-driven development and CI/CD best-practices as well as open source libraries.

IBM Data Science in Practice

MARCH 8, 2023

Source: IBM Cloud Pak for Data. MLOps teams often struggle when it comes to integrating into CI/CD pipelines. For MLOps teams, the core challenges lie in figuring out how to test and govern data. A feature platform should automatically process the data pipelines to calculate that feature. Spark, Flink, etc.)

IBM Journey to AI blog

SEPTEMBER 5, 2023

Empowering teams to use a standard pipeline based on Git to orchestrate the development and deployment of an application unleashes productivity. Wazi is a family of tools for delivering a cloud-native DX for z/OS and providing for cloud-native development and testing for z/OS in the IBM Cloud. No AI was used to write this article.

AWS Machine Learning Blog

JUNE 15, 2023

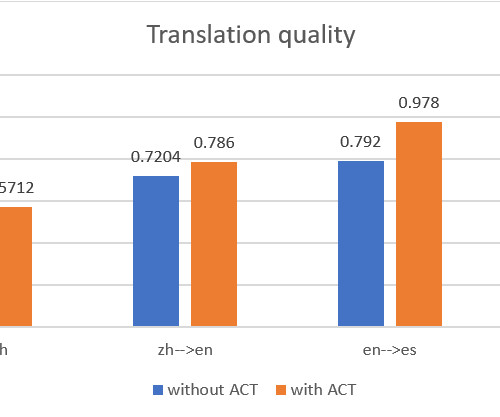

used to address this challenge by using the Active Custom Translation (ACT) feature of Amazon Translate and building a multilingual automatic translation pipeline. We also recommend best practices when using Amazon Translate in this automatic translation pipeline to ensure translation quality and efficiency.

Mlearning.ai

MAY 27, 2023

Data scientists can create code that is easier to understand, debug, and maintain if they adhere to coding standards, write modular and reusable routines, and include error-handling techniques. Software engineering emphasizes the need to test code to verify its correctness and durability.

Data Science Dojo

AUGUST 24, 2023

Testing and monitoring : MLOps and DevOps emphasize the importance of testing and monitoring to ensure consistent and reliable results. In MLOps, this involves testing and monitoring the accuracy and performance of ML models over time. Managing training pipelines and workflows for a more efficient and streamlined process.
