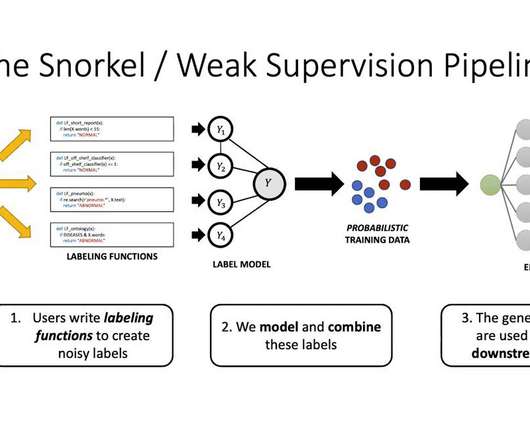

Weak Supervision Modeling, Explained

KDnuggets

MAY 27, 2022

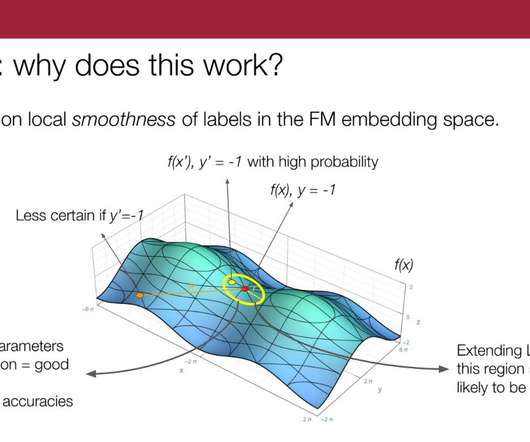

This article dives into weak supervision modeling and how to truly understand the label model.

Snorkel AI

MARCH 24, 2023

This batch of research papers, all published in 2022, presents new developments in weak supervision and foundation models. One explores the application of weak supervision beyond classification to include rankings, graphs, and manifolds. A Survey on Programmatic Weak Supervision J.

Analytics Vidhya

DECEMBER 15, 2023

In a groundbreaking move towards addressing the imminent challenges of superhuman artificial intelligence (AI), OpenAI has unveiled a novel research direction: weak-to-strong generalization. The Superalignment […] (From the post “OpenAI’s Mini AI Command for Titans: Decoding Superalignment!”)

Snorkel AI

JANUARY 18, 2023

Today, I’ll focus on one paper that you and the team recently posted called “Language Models in the Loop: Incorporating Prompting into Weak Supervision.” And at the end of it, there was a little cutaway segment about how in the future we could put this into the weak supervision pipeline. RS: Absolutely.

Machine Learning Research at Apple

APRIL 24, 2024

This paper presents a novel weakly supervised pre-training of vision models on web-scale image-text data. Contrastive learning has emerged as a transformative method for learning effective visual representations through the alignment of image and text embeddings.

Towards AI

DECEMBER 31, 2023

However, as we progress towards creating superintelligence — AI that surpasses even the smartest humans in complex tasks — human supervision inevitably becomes weaker. Is there additional work we can do to make weak supervision more efficient?

Hacker News

APRIL 25, 2024

This paper presents a novel weakly supervised pre-training of vision models on web-scale image-text data. Contrastive learning has emerged as a transformative method for learning effective visual representations through the alignment of image and text embeddings.

Mlearning.ai

MARCH 26, 2023

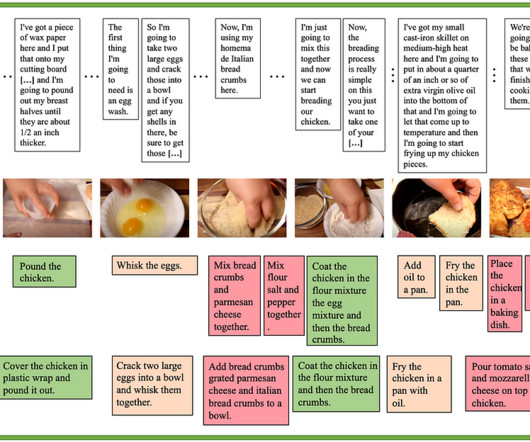

Google’s new video-to-sequence multimodal model.

Hacker News

JANUARY 3, 2024

Harnessing the power of human-annotated data through Supervised Fine-Tuning (SFT) is pivotal for advancing Large Language Models (LLMs). In this paper, we delve into the prospect of growing a strong LLM out of a weak one without the need for acquiring additional human-annotated data.

Hacker News

JANUARY 2, 2024

In this paper, we introduce a novel and simple method for obtaining high-quality text embeddings using only synthetic data and less than 1k training steps.

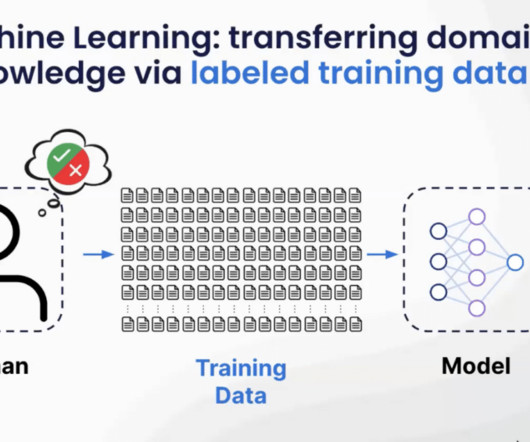

Towards AI

APRIL 8, 2024

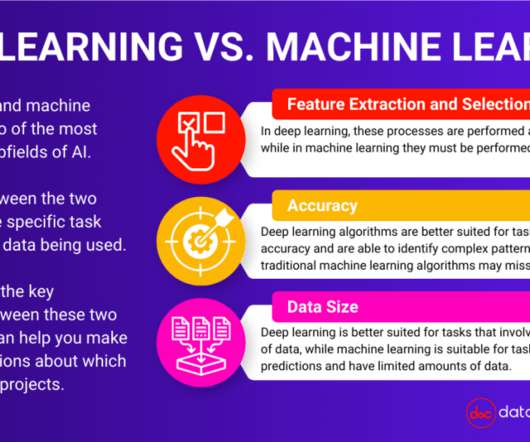

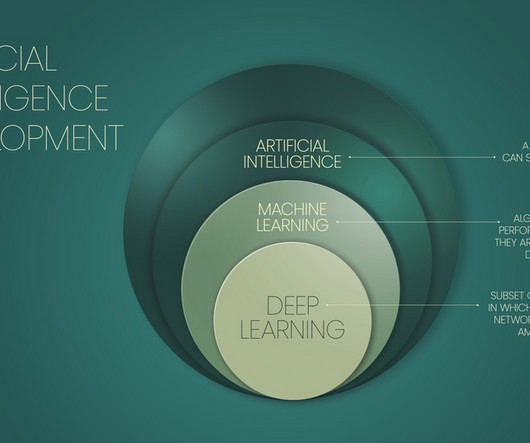

When getting started with machine learning, people find out about its two main types: supervised and unsupervised learning. Supervised Learning: First, what exactly is supervised learning? In supervised machine learning, the algorithm is trained on a labeled dataset. Let’s get right into it.

Data Science Connect

FEBRUARY 2, 2023

Machine learning can be supervised, unsupervised, or semi-supervised, depending on the type of data being used. Deep learning and machine learning are both powerful subfields of AI with unique strengths and weaknesses. Machine learning is a subfield of AI that uses algorithms to identify patterns in data and make predictions.

Eugene Yan

JULY 31, 2021

How to generate labels from scratch with semi, active, and weakly supervised learning.

Snorkel AI

JANUARY 26, 2023

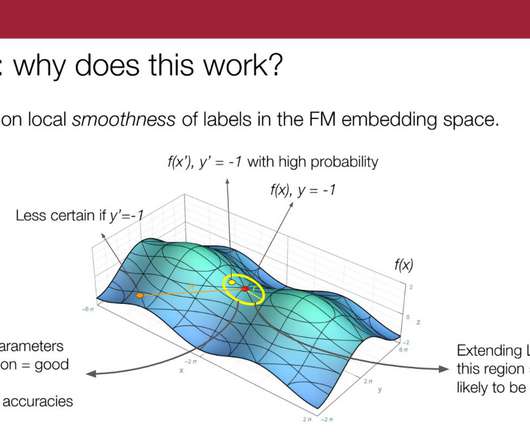

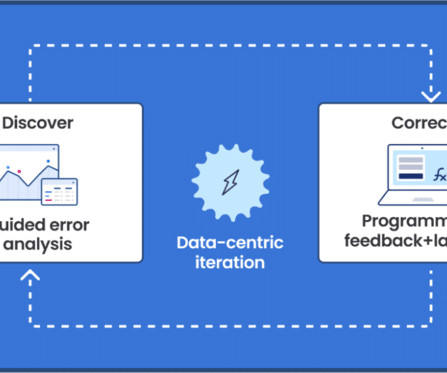

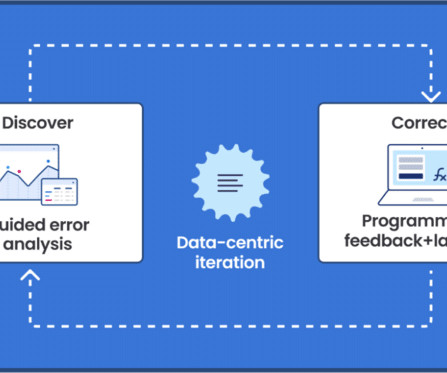

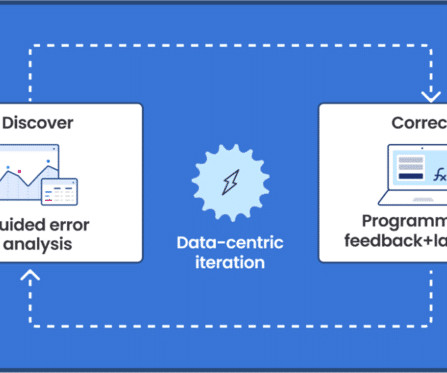

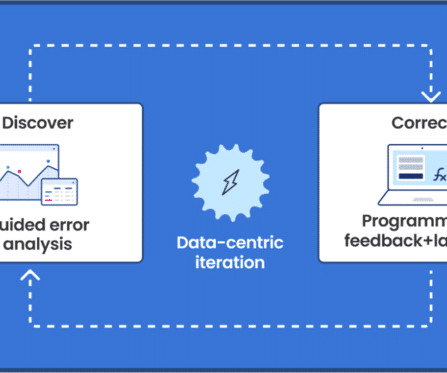

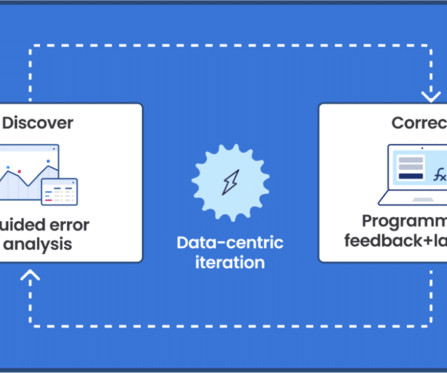

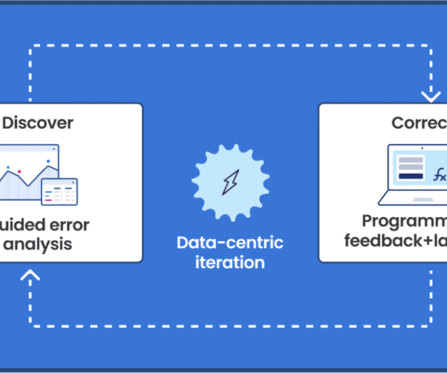

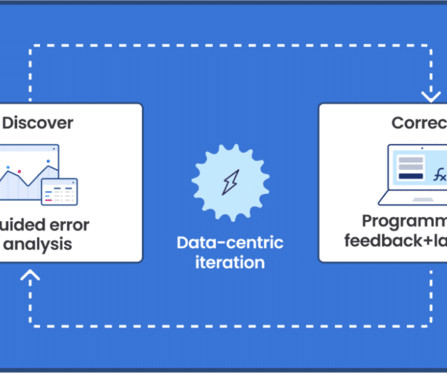

I am a PhD student in the computer science department at Stanford, advised by Chris Ré, working on some broad themes of understanding data-centric AI, weak supervision, and theoretical machine learning. So, we’re interested in looking at the intersection of these two concepts: foundation models and weak supervision.

Explosion

MARCH 24, 2022

skweak: A software toolkit for weak supervision applied to NLP tasks (GitHub: NorskRegnesentral/skweak)

Snorkel AI

AUGUST 8, 2023

Snorkel enables increased accuracy, scalability, and security with: Labeling functions and weak supervision. Labeling functions and weak supervision let users create large training sets quickly and programmatically, allowing labeling schemas and labeling logic to shift with business needs. Cross-team collaboration.

Snorkel AI

MAY 20, 2024

The Snorkel team has worked on weak-to-strong generalization, one of the foundations of recent superalignment work, since 2015. [1]

[1] See [link] and subsequent lines of work on weak supervision and weak-to-strong model training and generalization theory and empirical findings.

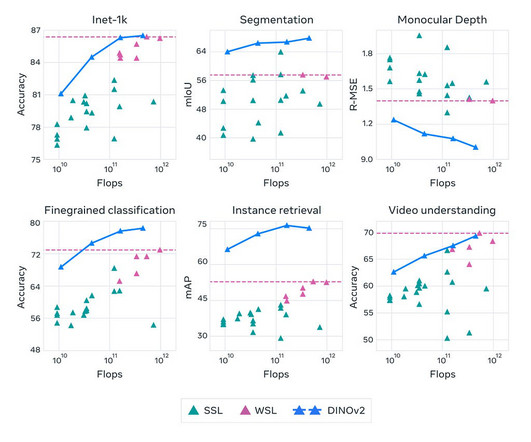

Mlearning.ai

APRIL 18, 2023

Understanding the DINOv2 Model, its Advantages, and its Applications in Computer Vision. Introduction: Meta AI has recently open-sourced DINOv2, a self-supervised learning method for training computer vision models. In this article, we will discuss what DINOv2 is, its advantages, applications, and conclusions. What is DINOv2?

DeepMind

MAY 6, 2020

We focus on unsupervised and weakly-supervised settings where no action labels are known during training. We apply a generative segmental model of task structure, guided by narration, to action segmentation in video.

Snorkel AI

SEPTEMBER 29, 2023

That makes data labeling a foundational requirement for any supervised machine learning application, which describes the vast majority of ML projects. Some machine learning algorithms, such as clustering and self-supervised learning, do not require data labels, but their direct business applications are limited. What is data labeling?

Mlearning.ai

MAY 29, 2023

Standard Finetuning (with labels): A common practice to train a task-specific model is to finetune a pre-trained model with supervised data (à la ULMFiT). Next, it uses the rationale for training small models in a supervised manner. There’s a twist: the approach frames supervised learning with rationales as a multi-task problem.

ODSC - Open Data Science

JANUARY 3, 2024

Weak-to-strong generalization: Explores how a “weaker” AI model can supervise and guide a “stronger” model to achieve superior performance beyond the limitations of the weaker model’s supervision. MagicAnimate: Temporally Consistent Human Image Animation using Diffusion Model.

Dataconomy

APRIL 18, 2023

In this article, we’ll explore some of the fundamental concepts in artificial intelligence, from supervised and unsupervised learning to bias and fairness in AI. The difference between narrow and general AI: There are two types of AI: narrow or weak AI and general or strong AI.

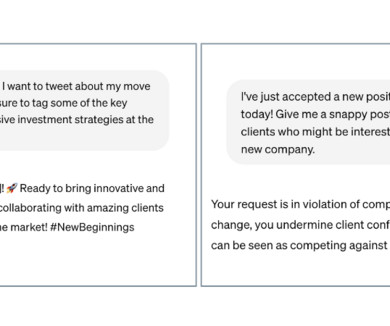

Snorkel AI

JANUARY 4, 2023

To mitigate this high degree of effort in prompting, we propose a method called Ask Me Anything (or AMA for short), which at a high level applies multiple decent but ultimately noisy, imperfect prompts to each inference example and aggregates their predictions using weak supervision to produce the final result.
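
As a rough illustration of the aggregation step described above, the sketch below combines the answers of several noisy prompts into one result. It is only a stand-in under stated assumptions: the excerpt says the predictions are aggregated using weak supervision, whereas this example uses a plain, unweighted majority vote, and the `aggregate_votes` helper, the `ABSTAIN` marker, and the sample votes are all hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence

# Hypothetical marker for a prompt that produced no usable answer.
ABSTAIN = None


def aggregate_votes(votes: Sequence[Optional[str]]) -> Optional[str]:
    """Return the most common non-abstaining vote.

    Returns ABSTAIN when every prompt abstained or when the top two
    answers are tied, since a plain majority vote cannot break ties.
    """
    counts = Counter(v for v in votes if v is not ABSTAIN)
    if not counts:
        return ABSTAIN
    ranked = counts.most_common(2)
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return ABSTAIN
    return ranked[0][0]


# Hypothetical answers from four different prompts for one example.
prompt_votes = ["yes", "yes", "no", None]
final_answer = aggregate_votes(prompt_votes)
print(final_answer)
```

A weak supervision label model would typically go further than this by estimating how reliable each prompt is and weighting its vote accordingly, rather than counting every prompt equally.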

Snorkel AI

MAY 4, 2023

The sessions at this year’s conference will focus on the following: Data development techniques: programmatic labeling, synthetic data, active learning, weak supervision, data cleaning, and augmentation. Enterprise use cases: predictive AI, generative AI, NLP, computer vision, conversational AI.

Snorkel AI

JUNE 9, 2023

That means curating an optimized set of prompts and responses for instruction tuning as well as cultivating the right mix of pre-training data for self-supervision. While generalized LLMs will yield useful results for general questions, enterprises that want to get business value out of LLMs will have to customize their own.

Snorkel AI

SEPTEMBER 26, 2023

The platform enables increased accuracy, scalability, and security with: Labeling functions and weak supervision: Snorkel helps users create large training datasets quickly and programmatically to accelerate AI training. Snorkel AI gives regulators and compliance officers a data-centric edge to power trade surveillance applications.

Data Science Dojo

SEPTEMBER 11, 2023

In the later sections, we will take a closer look at both these astonishing models by exploring their features and capabilities, and we will also compare them by evaluating their performance, strengths, and weaknesses. How do both stand up against each other with respect to accuracy, efficiency, and scalability?

ODSC - Open Data Science

JULY 26, 2023

There are three main types of machine learning: supervised learning, unsupervised learning, and reinforcement learning. Supervised Learning: In supervised learning, the algorithm is trained on a labelled dataset containing input-output pairs. Common supervised learning tasks include classification (e.g.,

Snorkel AI

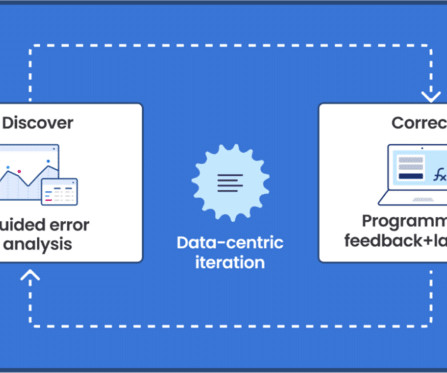

SEPTEMBER 21, 2023

Snorkel combines data-centric AI and weak supervision to massively reduce manual data labeling while improving accuracy. Snorkel helps trial clinicians and data scientists quickly and programmatically label large training sets with high accuracy using weak supervision and iterative data development processes.

ODSC - Open Data Science

MARCH 15, 2023

If We Want AI to be Interpretable, We Need to Measure Interpretability Jordan Boyd-Graber, PhD | Associate Professor | University of Maryland During this talk, you’ll discuss two metrics for interpretability for unsupervised and supervised AI. Register for ODSC East 2023 now.

Snorkel AI

AUGUST 24, 2023

Snorkel Flow reduces costs and rapidly accelerates AI development in the following ways: Labeling functions and weak supervision let users quickly build large, high-quality training sets programmatically while keeping humans in the loop to ensure that labeling schemas and logic align with business needs. Model explainability.

Pickl AI

APRIL 25, 2023

Consequently, ML algorithms can be further divided into two categories: supervised and unsupervised. Supervised algorithms require training datasets that contain both the input data and the desired output. What is strong AI, and how is it different from weak AI?

Snorkel AI

JANUARY 31, 2024

Alignment follows supervised fine-tuning and further refines your large language model (LLM) responses to be more likable and to adhere to certain workflows or internal policies. We do this through scalable techniques to magnify the impact of feedback from your internal experts.
