Coding Self-Attention, Multi-Head Attention, Cross-Attention, Causal-Attention


JANUARY 14, 2024

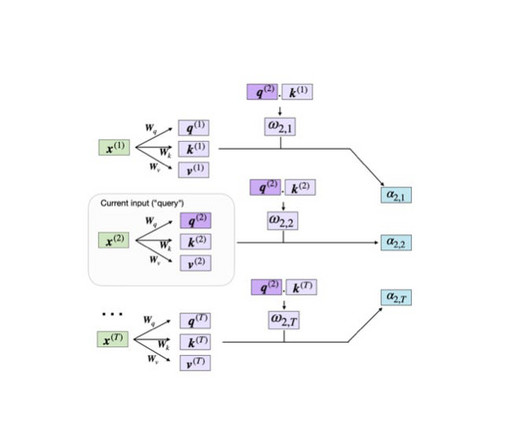

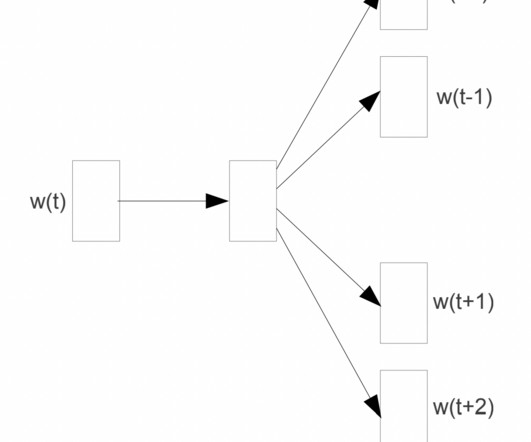

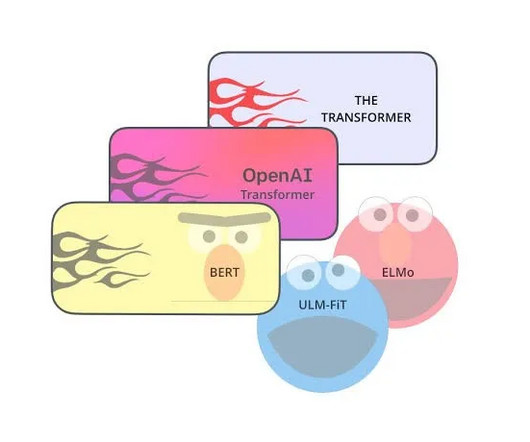

This article will teach you about self-attention mechanisms used in transformer architectures and large language models (LLMs) such as GPT-4 and Llama. Self-attention and related mechanisms are core components of LLMs, making them a useful topic to understand when working with these models.
