Language Modeling From Scratch — Deep Dive Into Activations, Gradients and BatchNormalization (Part 3)

Towards AI

FEBRUARY 22, 2024

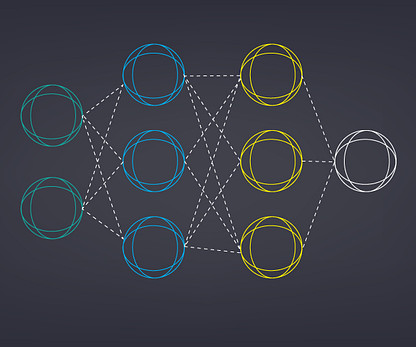

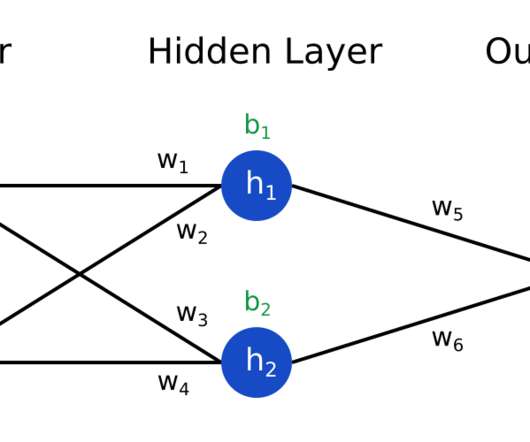

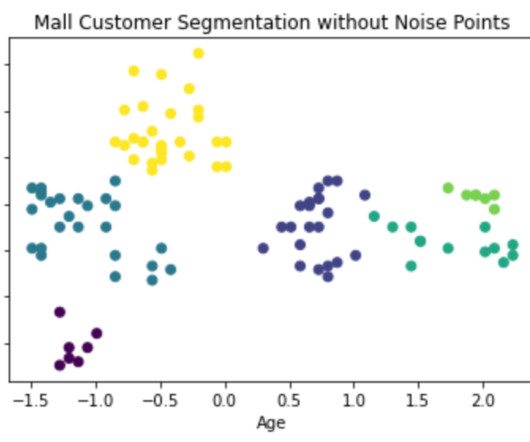

This is a separate article in itself because it’s important to develop a solid understanding of these topics before we move on to more complex architectures like RNNs and Transformers. In this article, we’ll take a deeper look at activations, gradients, and batch normalization. I highly recommend reading the previous articles in the series first.
