The Genius of Mixtral of Experts by Mistral AI

Data Science Dojo

FEBRUARY 9, 2024

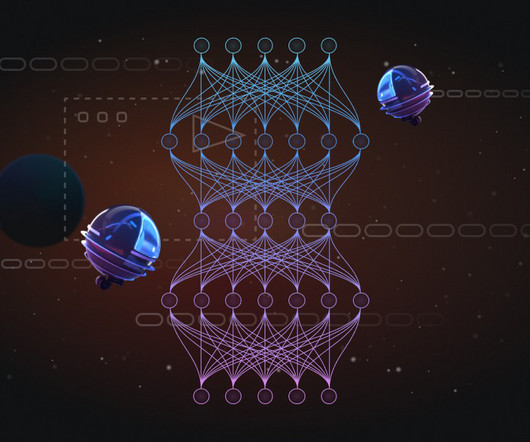

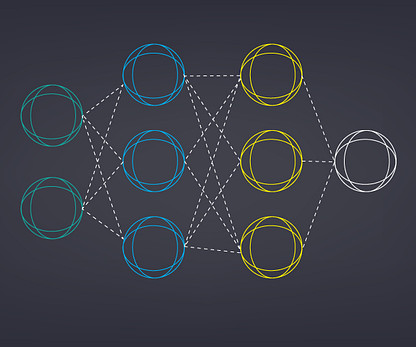

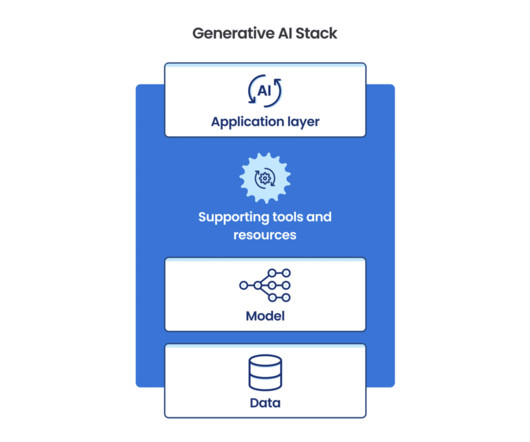

The race among big tech companies and startups to build the top language model has us eager to see how things change. Mixtral stands out for its use of the Sparse Mixture of Experts (SMoE) technique, which combines the expertise of several specialized models. How does Mixtral work, and what is unique about its framework?
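To give a feel for what "sparse" means here, below is a minimal sketch of top-k expert routing in plain Python. It is an illustration under stated assumptions, not Mistral AI's implementation: the function names (`top_k_route`, `sparse_moe`), the toy experts, the router scores, and the choice of `k=2` are all hypothetical. The idea it shows is that a router scores every expert for a token, only the highest-scoring few are actually run, and their outputs are blended with softmax weights.

```python
import math

def top_k_route(router_logits, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over only the selected logits, so the k weights sum to 1.
    m = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def sparse_moe(x, experts, router_logits, k=2):
    """Blend the outputs of the k selected experts; the others are never called."""
    out = [0.0] * len(x)
    for idx, weight in top_k_route(router_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Toy experts (hypothetical stand-ins): each scales its input by a constant.
experts = [lambda v, s=s: [s * vi for vi in v] for s in (1.0, 2.0, 3.0, 4.0)]
token = [1.0, -1.0]
logits = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for this token

routes = top_k_route(logits)
result = sparse_moe(token, experts, logits)
print(routes)
print(result)
```

In a real model the experts would be feed-forward networks and the router scores would come from a learned layer, but the selection-then-weighted-sum pattern is the part this sketch is meant to convey.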
