Groq sparks LPU vs GPU face-off

Dataconomy

FEBRUARY 26, 2024

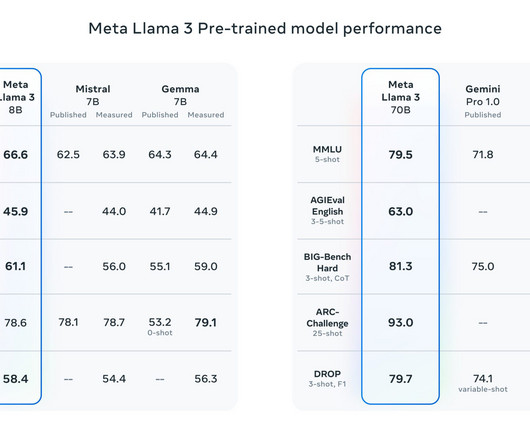

This week, Groq’s LPU astounded the tech community by running open-source Large Language Models (LLMs) such as the 70-billion-parameter Llama-2 at more than 100 tokens per second. Groq took an unconventional approach from its inception, prioritizing software and compiler design before hardware development.
