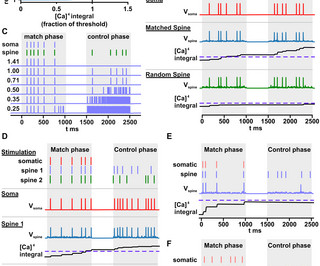

Short-term Hebbian learning can implement transformer-like attention

Hacker News

MARCH 3, 2024

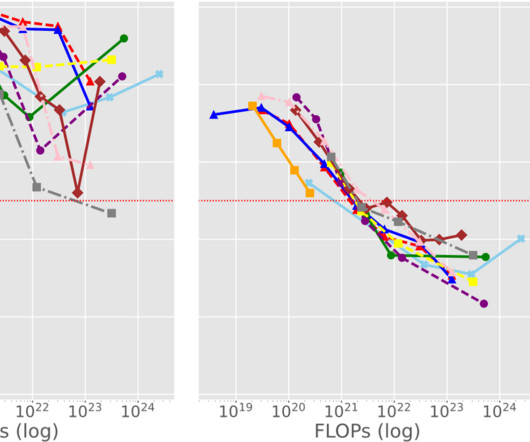

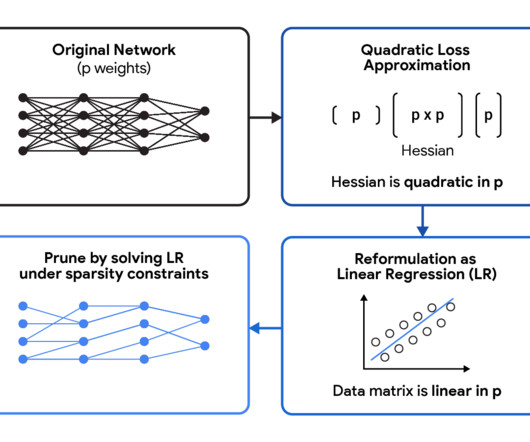

Transformers are built from so-called attention layers, which perform large numbers of comparisons between the vector outputs of the previous layers, allowing information to flow through the network more dynamically than in earlier designs. These comparisons are computationally expensive and have no known analogue in the brain.
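To make the "large numbers of comparisons" concrete, here is a minimal NumPy sketch of standard scaled dot-product self-attention. It is an illustration of the usual transformer formulation, not code from the work described here; the function name `self_attention`, the random projection matrices, and the toy sizes are all assumptions chosen for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention (illustrative sketch).

    X has shape (n_tokens, d_model): one vector per output of the previous layer.
    Wq, Wk, Wv are hypothetical projection matrices of shape (d_model, d_model).
    """
    Q = X @ Wq  # queries
    K = X @ Wk  # keys
    V = X @ Wv  # values
    # Every query is compared with every key: n_tokens * n_tokens dot products.
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

# Toy input: random vectors standing in for the previous layer's outputs.
rng = np.random.default_rng(0)
n_tokens, d_model = 6, 8
X = rng.normal(size=(n_tokens, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_model)) for _ in range(3))

out, weights = self_attention(X, Wq, Wk, Wv)
print(weights.shape)  # (6, 6): one comparison per pair of tokens
```

The `weights` matrix has one entry per pair of tokens, so the number of comparisons grows quadratically with the sequence length, which is the source of the computational cost mentioned above.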
