LoRA Learns Less and Forgets Less

Hacker News

MAY 17, 2024

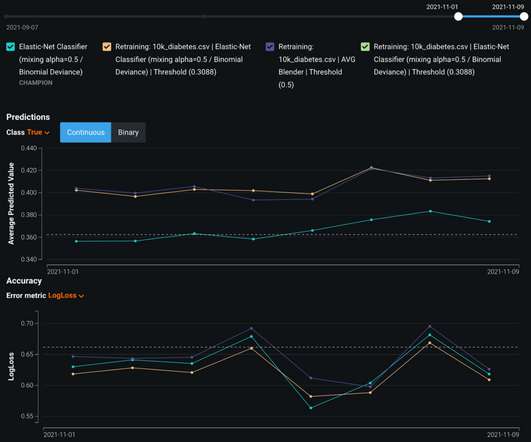

Our results show that, in most settings, LoRA substantially underperforms full finetuning. We show that LoRA provides stronger regularization than common techniques such as weight decay and dropout; it also helps maintain more diverse generations. We conclude by proposing best practices for finetuning with LoRA.
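For readers unfamiliar with the mechanism being compared, here is a minimal sketch of the standard LoRA formulation: the pretrained weight W stays frozen and only a low-rank product B·A, scaled by alpha / r, is trained. This is not code from the article; the helper names (`matmul`, `lora_effective_weight`, `trainable_params`) and the toy dimensions are illustrative assumptions, and plain Python lists stand in for tensors.

```python
import random


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, a, b, alpha):
    """Return W + (alpha / r) * B @ A, where r is the adapter rank."""
    r = len(a)  # A has shape (r, d_in); B has shape (d_out, r)
    scale = alpha / r
    delta = matmul(b, a)  # low-rank update with shape (d_out, d_in)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d_out, d_in, r):
    """Trainable parameter counts as (full finetuning, LoRA)."""
    return d_out * d_in, r * (d_in + d_out)


# Toy dimensions, chosen only for illustration.
rng = random.Random(0)
d_out, d_in, r, alpha = 6, 8, 2, 4.0

w = [[rng.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]  # frozen weight
a = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]   # trainable
b = [[0.0] * r for _ in range(d_out)]  # trainable; zero init means no change at start

w_eff = lora_effective_weight(w, a, b, alpha)
full, lora = trainable_params(d_out, d_in, r)
print(f"trainable parameters: full={full}, lora={lora}")
```

Because the update is confined to rank r, the adapted weight can only move within a low-dimensional subspace around W. That constraint is one intuition for why LoRA might act as a regularizer, though the sketch itself does not demonstrate the article's results.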
