Building a Scalable ETL with SQL + Python

KDnuggets

APRIL 21, 2022

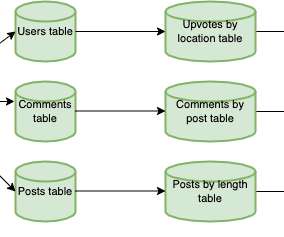

This post will look at building a modular ETL pipeline that transforms data with SQL and visualizes it with Python and R.
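
A modular pipeline of the kind described here can be sketched with Python's built-in `sqlite3` module. This is only an illustrative sketch, not the post's own code: the table names (`raw_orders`, `region_totals`), the sample rows, and the function names are all assumptions made for the example. Each stage is a separate function, and the transformation step is plain SQL.

```python
import sqlite3


def extract():
    # Hypothetical source rows: (order_id, region, amount).
    return [(1, "north", 120.0), (2, "south", 80.0), (3, "north", 50.0)]


def load_raw(conn, rows):
    # Stage the extracted rows in a raw table.
    conn.execute(
        "CREATE TABLE raw_orders (order_id INTEGER, region TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)


def transform(conn):
    # The transformation itself is expressed in SQL.
    conn.execute(
        """
        CREATE TABLE region_totals AS
        SELECT region, SUM(amount) AS total
        FROM raw_orders
        GROUP BY region
        """
    )


def run_pipeline():
    conn = sqlite3.connect(":memory:")
    load_raw(conn, extract())
    transform(conn)
    query = "SELECT region, total FROM region_totals ORDER BY region"
    return conn.execute(query).fetchall()


totals = run_pipeline()
```

Because each stage is its own function, a stage can be swapped (for example, reading from a file instead of the hard-coded rows) without touching the others. The resulting `totals` list could then be handed to any plotting library for the visualization step.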

KDnuggets

APRIL 27, 2022

A Brief Introduction to Papers With Code; Machine Learning Books You Need To Read In 2022; Building a Scalable ETL with SQL + Python; 7 Steps to Mastering SQL for Data Science; Top Data Science Projects to Build Your Skills.

Data Science Dojo

JULY 6, 2023

These tools provide data engineers with the necessary capabilities to efficiently extract, transform, and load (ETL) data, build data pipelines, and prepare data for analysis and consumption by other applications. dbt focuses on transforming raw data into analytics-ready tables using SQL-based transformations.

Pickl AI

JULY 25, 2023

They create data pipelines, ETL processes, and databases to facilitate smooth data flow and storage. With expertise in programming languages like Python, Java, and SQL, and knowledge of big data technologies like Hadoop and Spark, data engineers optimize pipelines for data scientists and analysts to access valuable insights efficiently.

Mlearning.ai

APRIL 24, 2023

The Coursera class is direct to the point and gives concrete instructions about how to use the Azure Portal interface, Databricks, and the Python SDK; if you know nothing about Azure and need to use the service platform right away I highly recommend this course.

AWS Machine Learning Blog

APRIL 16, 2024

They then use SQL to explore, analyze, visualize, and integrate data from various sources before using it in their ML training and inference. Previously, data scientists often found themselves juggling multiple tools to support SQL in their workflow, which hindered productivity.

Pickl AI

APRIL 26, 2024

It covers essential topics such as SQL queries, data visualization, statistical analysis, machine learning concepts, and data manipulation techniques. Key Takeaways SQL Mastery: Understand SQL’s importance, join tables, and distinguish between SELECT and SELECT DISTINCT. How do you join tables in SQL?
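
The difference between `SELECT` and `SELECT DISTINCT` after a join can be illustrated with a small in-memory SQLite database. The `customers` and `orders` tables and their rows below are hypothetical, chosen only for this sketch.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, item TEXT);
    INSERT INTO customers VALUES (1, 'Ada'), (2, 'Grace');
    INSERT INTO orders VALUES (1, 1, 'book'), (2, 1, 'pen'), (3, 2, 'book');
    """
)

# Inner join: one output row per matching (customer, order) pair.
all_names = [
    row[0]
    for row in conn.execute(
        """
        SELECT c.name
        FROM customers AS c
        JOIN orders AS o ON o.customer_id = c.id
        ORDER BY c.name
        """
    )
]

# Same join, but DISTINCT collapses duplicate result rows.
distinct_names = [
    row[0]
    for row in conn.execute(
        """
        SELECT DISTINCT c.name
        FROM customers AS c
        JOIN orders AS o ON o.customer_id = c.id
        ORDER BY c.name
        """
    )
]
```

Here `all_names` contains one entry per order, so a customer with two orders appears twice, while `distinct_names` lists each customer once.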

phData

AUGUST 9, 2023

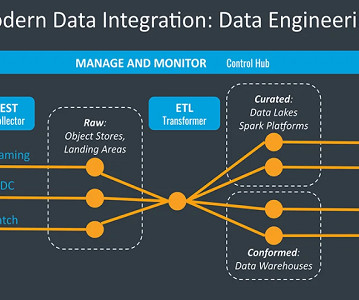

This is unlike the more traditional ETL method, where data is transformed before loading into the data warehouse. By bringing raw data into the data warehouse and then transforming it there, ELT provides more flexibility compared to ETL’s fixed pipelines. ETL systems just couldn’t handle the massive flows of raw data.
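
The ordering difference between the two approaches can be sketched as follows, using an in-memory SQLite database as a stand-in for a data warehouse. The sample rows and the table and view names (`etl_clean`, `elt_raw`, `elt_clean`) are assumptions made for illustration only.

```python
import sqlite3

# Hypothetical source rows: (key, value stored as text, possibly missing).
RAW = [("a", "10"), ("b", None), ("c", "30")]


def etl(conn):
    # ETL: transform in Python first, then load only the cleaned rows.
    clean = [(k, int(v)) for k, v in RAW if v is not None]
    conn.execute("CREATE TABLE etl_clean (k TEXT, v INTEGER)")
    conn.executemany("INSERT INTO etl_clean VALUES (?, ?)", clean)


def elt(conn):
    # ELT: load the raw rows untouched, then transform inside the database.
    conn.execute("CREATE TABLE elt_raw (k TEXT, v TEXT)")
    conn.executemany("INSERT INTO elt_raw VALUES (?, ?)", RAW)
    conn.execute(
        """
        CREATE VIEW elt_clean AS
        SELECT k, CAST(v AS INTEGER) AS v
        FROM elt_raw
        WHERE v IS NOT NULL
        """
    )


conn = sqlite3.connect(":memory:")
etl(conn)
elt(conn)

etl_rows = conn.execute("SELECT k, v FROM etl_clean ORDER BY k").fetchall()
elt_rows = conn.execute("SELECT k, v FROM elt_clean ORDER BY k").fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM elt_raw").fetchone()[0]
```

Both paths yield the same cleaned rows, but only the ELT path keeps all of the raw rows in the database, so a different transformation can be defined later without re-extracting the data.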

Data Science Dojo

MAY 10, 2023

Here, we outline the essential skills and qualifications that pave the way for data science careers: Proficiency in Programming Languages – Mastery of programming languages such as Python, R, and SQL forms the foundation of a data scientist’s toolkit.

JUNE 26, 2023

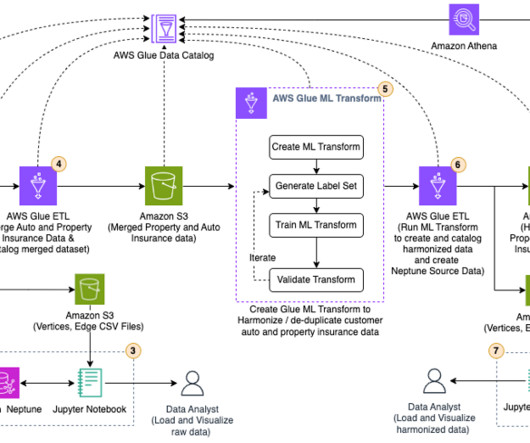

Transform raw insurance data into CSV format acceptable to Neptune Bulk Loader, using an AWS Glue extract, transform, and load (ETL) job. Run an AWS Glue ETL job to merge the raw property and auto insurance data into one dataset and catalog the merged dataset. We use Python scripts to analyze the data in a Jupyter notebook.

ODSC - Open Data Science

APRIL 3, 2023

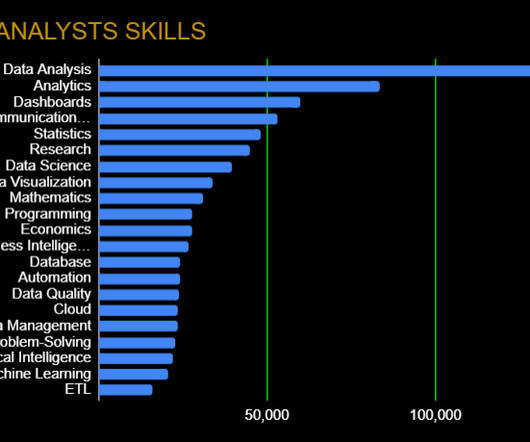

Data Wrangling: Data Quality, ETL, Databases, Big Data The modern data analyst is expected to be able to source and retrieve their own data for analysis. Competence in data quality, databases, and ETL (Extract, Transform, Load) is essential. SQL excels with big data and statistics, making it important in order to query databases.

Pickl AI

JULY 3, 2023

Here are steps you can follow to pursue a career as a BI Developer: Acquire a solid foundation in data and analytics: Start by building a strong understanding of data concepts, relational databases, SQL (Structured Query Language), and data modeling.

AWS Machine Learning Blog

DECEMBER 14, 2023

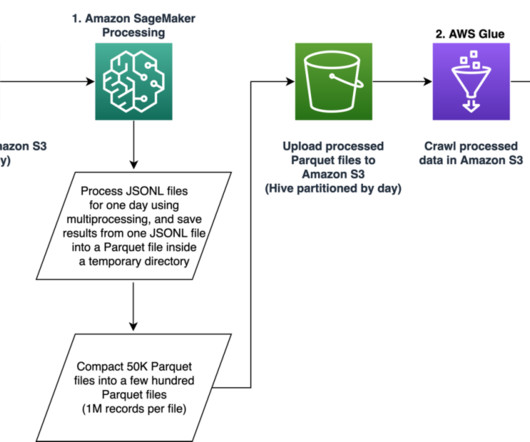

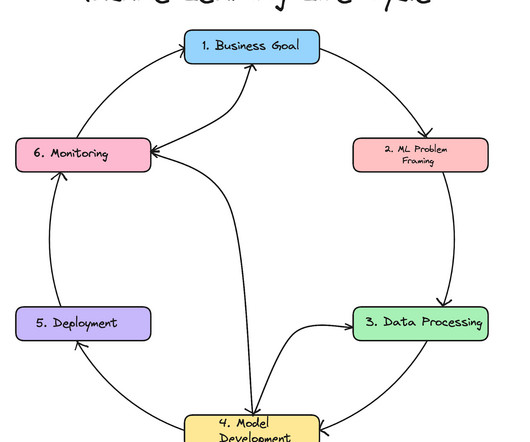

Our pipeline belongs to the general ETL (extract, transform, and load) process family that combines data from multiple sources into a large, central repository. The system includes feature engineering, deep learning model architecture design, hyperparameter optimization, and model evaluation, where all modules are run using Python.

Smart Data Collective

APRIL 29, 2020

Extract, Transform, Load (ETL). Redshift is the product for data warehousing, and Athena provides SQL data analytics. It has useful features, such as an in-browser SQL editor for queries and data analysis, various data connectors for easy data ingestion, and automated data preprocessing and ingestion. Master data management.

ODSC - Open Data Science

JANUARY 18, 2024

Data scientists typically have strong skills in areas such as Python, R, statistics, machine learning, and data analysis. For example, if you’re a talented Python programmer, there may be other packages, libraries, and frameworks that you are familiar with. With that said, each skill may be used in a different manner.

Data Science Blog

SEPTEMBER 19, 2023

This brings reliability to data ETL (Extract, Transform, Load) processes, query performance, and other critical data operations. using for loops in Python). The following Terraform script will create an Azure Resource Group, a SQL Server, and a SQL Database. So why use IaC for Cloud Data Infrastructures?

Pickl AI

APRIL 6, 2023

Strong programming language skills in at least one of the languages like Python, Java, R, or Scala. Hands-on experience working with SQLDW and SQL-DB. Answer : Polybase helps optimize data ingestion into PDW and supports T-SQL. Sound knowledge of relational databases or NoSQL databases like Cassandra. What is Polybase?

ODSC - Open Data Science

APRIL 6, 2023

For budding data scientists and data analysts, there are mountains of information about why you should learn R over Python and the other way around. Though both are great to learn, what gets left out of the conversation is a simple yet powerful programming language that everyone in the data science world can agree on, SQL.

The MLOps Blog

MARCH 15, 2023

Enables users to trigger their custom transformations via SQL and dbt. Now that’s out of the way, let’s get to the details of each offer: Apache Airflow Overview It is one of the most popular open-source python-based data pipeline tools with high flexibility in creating workflows and tasks.

Pickl AI

NOVEMBER 14, 2023

Looking for an effective and handy Python code repository in the form of Importing Data in Python Cheat Sheet? Your journey ends here where you will learn the essential handy tips quickly and efficiently with proper explanations which will make any type of data importing journey into the Python platform super easy.

ODSC - Open Data Science

SEPTEMBER 29, 2023

Spark is more focused on data science, ingestion, and ETL, while HPCC Systems focuses on ETL and data delivery and governance. It’s not a widely known programming language like Java, Python, or SQL. ECL sounds compelling, but it is a new programming language and has fewer users than languages like Python or SQL.

Dataconomy

MARCH 24, 2023

BI developer: A BI developer is responsible for designing and implementing BI solutions, including data warehouses, ETL processes, and reports. Database management: A BI professional should be able to design and manage databases, including data modeling, ETL processes, and data integration.

phData

FEBRUARY 7, 2024

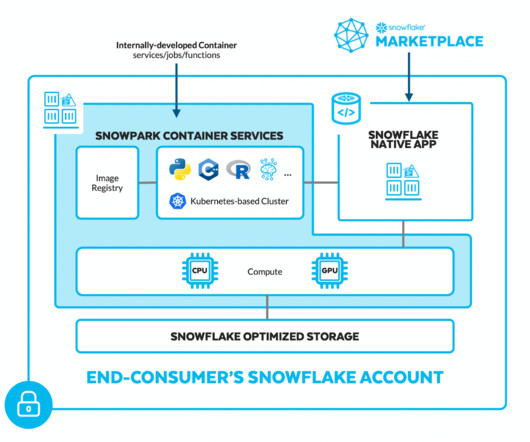

Snowpark is the set of libraries and runtimes in Snowflake that securely deploy and process non-SQL code, including Python, Java, and Scala. On the server side, runtimes include Python, Java, and Scala in the warehouse model or Snowpark Container Services (public preview). filter(col("id") == 1).select(col("name"),

Smart Data Collective

MAY 20, 2019

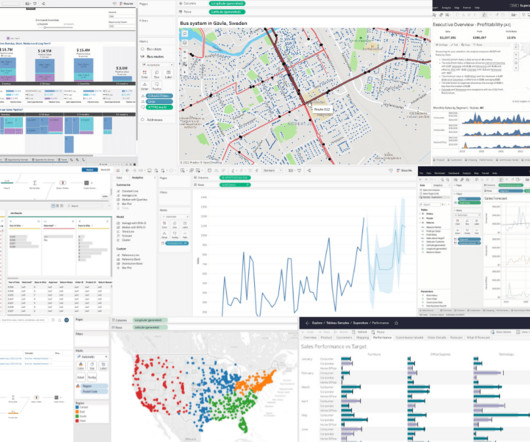

For frameworks and languages, there’s SAS, Python, R, Apache Hadoop and many others. The popular tools, on the other hand, include Power BI, ETL, IBM Db2, and Teradata. SQL programming skills, specific tool experience — Tableau for example — and problem-solving are just a handful of examples.

The MLOps Blog

JANUARY 23, 2023

More useful resources about DVC: Versioning data and models Data version control with Python and DVC DVCorg YouTube DVC data version control cheatsheet At this point, one question arises; why use DVC instead of Git? With lakeFS it is possible to test ETLs on top of production data, in isolation, without copying anything.

phData

FEBRUARY 14, 2023

Matillion Matillion is a complete ETL tool that integrates with an extensive list of pre-built data source connectors, loads data into cloud data environments such as Snowflake, and then performs transformations to make data consumable by analytics tools such as Tableau and PowerBI.

The MLOps Blog

SEPTEMBER 7, 2023

From writing code for doing exploratory analysis, experimentation code for modeling, ETLs for creating training datasets, Airflow (or similar) code to generate DAGs, REST APIs, streaming jobs, monitoring jobs, etc. Implementing these practices can enhance the efficiency and consistency of ETL workflows.

phData

AUGUST 17, 2023

They offer a range of features and integrations, so the choice depends on factors like the complexity of your data pipeline, requirements for connections to other services, user interface, and compatibility with any ETL software already in use. It also allows you to create custom operators to integrate with specific systems.

The MLOps Blog

JANUARY 26, 2024

Related article How to Build ETL Data Pipelines for ML See also MLOps and FTI pipelines testing Once you have built an ML system, you have to operate, maintain, and update it. All of them are written in Python. Python is the only prerequisite for the course, and your first ML system will consist of just three different Python scripts.

AWS Machine Learning Blog

JANUARY 17, 2024

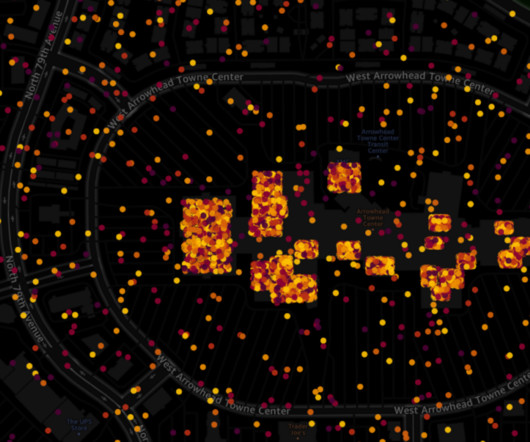

The GPU-powered interactive visualizer and Python notebooks provide a seamless way to explore millions of data points in a single window and share insights and results. As part of the initial ETL, this raw data can be loaded onto tables using AWS Glue. Sources and schema There are few sources of mobility data.

The MLOps Blog

OCTOBER 20, 2023

Example template for an exploratory notebook | Source: Author How to organize code in Jupyter notebook For exploratory tasks, the code to produce SQL queries, pandas data wrangling, or create plots is not important for readers. If a reviewer wants more detail, they can always look at the Python module directly. Redshift).

Alation

JANUARY 17, 2023

Reverse ETL tools. The modern data stack is also the consequence of a shift in analysis workflow, from extract, transform, load (ETL) to extract, load, transform (ELT). A Note on the Shift from ETL to ELT. In the past, data movement was defined by ETL: extract, transform, and load. Extract, load, transform (ELT) tools.

Applied Data Science

AUGUST 2, 2021

The most common data science languages are Python and R — SQL is also a must have skill for acquiring and manipulating data. They build production-ready systems using best-practice containerisation technologies, ETL tools and APIs. The Data Engineer Not everyone working on a data science project is a data scientist.

The MLOps Blog

AUGUST 3, 2023

At a high level, we are trying to make machine learning initiatives more human capital efficient by enabling teams to more easily get to production and maintain their model pipelines, ETLs, or workflows. You could almost think of Hamilton as DBT for Python functions. It gives a very opinionated way of writing Python. Stefan: Yep!

DagsHub

APRIL 7, 2024

Thanks to its various operators, it is integrated with Python, Spark, Bash, SQL, and more. Flexibility: Its use cases are wider than just machine learning; for example, we can use it to set up ETL pipelines. Programming language: It offers a simple way to transform Python code into an interactive workflow application.

Tableau

AUGUST 21, 2023

Hyper Supercharge your analytics with in-memory data engine Hyper is Tableau's blazingly fast SQL engine that lets you do fast real-time analytics, interactive exploration, and ETL transformations through Tableau Prep. An ODBC connector lets you access any data source that supports the SQL standard and implements the ODBC API.

IBM Journey to AI blog

JULY 17, 2023

The next generation of Db2 Warehouse SaaS and Netezza SaaS on AWS fully support open formats such as Parquet and Iceberg table format, enabling the seamless combination and sharing of data in watsonx.data without the need for duplication or additional ETL.

NOVEMBER 24, 2023

This use case highlights how large language models (LLMs) are able to become a translator between human languages (English, Spanish, Arabic, and more) and machine interpretable languages (Python, Java, Scala, SQL, and so on) along with sophisticated internal reasoning. Room for improvement!
