Mistral’s New Model Crushes Benchmarks in 4+ Languages

Analytics Vidhya

APRIL 21, 2024

Mixtral 8x22B by Mistral AI Crushes Benchmarks in 4+ Languages.

Hacker News

APRIL 21, 2024

It’s a generally accepted belief that as you age, your resting metabolism slows — especially over age 40. Not true, says an August study published in the journal Science. Fitness expert Dana Santas shares four science-backed ways to boost your metabolism.

Analytics Vidhya

APRIL 21, 2024

Converting natural language queries into code is one of the toughest challenges in NLP. The ability to turn a simple English question into complex code opens up many possibilities for developer productivity and a faster software development lifecycle. This is where Google Gemma, an open-source large language model, comes into […] The post Fine-tuning Google Gemma with Unsloth appeared first on Analytics Vidhya.

Hacker News

APRIL 21, 2024

A declaration signed by dozens of scientists says there is “a realistic possibility” for elements of consciousness in reptiles, insects and molluscs.

Advertisement

Generative AI is upending the way product developers & end-users alike are interacting with data. Despite the potential of AI, many are left with questions about the future of product development: How will AI impact my business and contribute to its success? What can product managers and developers expect in the future with the widespread adoption of AI?

Analytics Vidhya

APRIL 21, 2024

In today’s data-driven world, data science skills are more crucial than ever. But bridging the gap between textbook knowledge and real-world application is essential. Data science internships provide the perfect solution. These internships allow you to sharpen your skills by applying your programming prowess and analytical thinking to solve impactful problems.

Machine Learning Research at Apple

APRIL 21, 2024

Adaptive gradient methods, notably Adam, have become indispensable for optimizing neural networks, particularly in conjunction with Transformers. In this paper, we present a novel optimization anomaly called the Slingshot Effect, which manifests during extremely late stages of training. We identify a distinctive characteristic of this phenomenon through cyclic phase transitions between stable and unstable training regimes, as evidenced by the cyclic behavior of the norm of the last layer’s weights.

Data Science Current brings together the best content for data science professionals from the widest variety of thought leaders.

Hacker News

APRIL 21, 2024

I recently had the pleasure of interviewing Pedro David Garcia Lopez, a Ruby and Rails developer based in the UK who used to be a lorry driver. What's interesting is that he decided to become a developer at the age of 38. This post shares his story, and I hope you find it as inspiring as I did!

Towards AI

APRIL 21, 2024

Last Updated on April 21, 2024 by Editorial Team. Author(s): Salvatore Raieli. Originally published on Towards AI. In a deluge of information, research is becoming more and more isolated, and this is a problem. “Communication — the human connection — is the key to personal and career success.” (Paul J. Meyer) “The need for connection and community is primal, as fundamental as the need for air, water, and food.” (Dean Ornish) The degree to which the ideas and artifacts

Hacker News

APRIL 21, 2024

It’s surprisingly easy to be generous and find solutions to our friends’ problems. Much easier than it is to do it for ourselves. Why? There are two useful reasons, I think. FIRST, because we’re unaware of all the real and imaginary boundaries our friends have set up. If it were easy to solve the problem, they probably would have solved it already. But they’re making it hard because they have decided that there are people or systems that aren’t worth challenging.

Towards AI

APRIL 21, 2024

Last Updated on April 21, 2024 by Editorial Team. Author(s): Mélony Qin (aka cloudmelon). Originally published on Towards AI. As AI tools become increasingly popular, they play an important role in boosting our productivity in everyday tasks. However, bringing them into professional settings is challenging: they need internet access, and some company policies simply do not allow them.

Advertisement

Many organizations today are unlocking the power of their data by using graph databases to feed downstream analytics, enhance visualizations, and more. Yet, when different graph nodes represent the same entity, graphs get messy. Watch this essential video with Senzing CEO Jeff Jonas on how adding entity resolution to a graph database condenses network graphs to improve analytics and save your analysts time.

Hacker News

APRIL 21, 2024

The social psychologist discusses the “great rewiring” of children’s brains, why social-media companies are to blame, and how to reverse course.

Towards AI

APRIL 21, 2024

Last Updated on April 21, 2024 by Editorial Team. Author(s): Eera Bhatt. Originally published on Towards AI. Especially since the COVID-19 pandemic, we have relied on the Internet for many of our daily services, such as banking, entertainment, and social networking. Sadly, cyber attackers take advantage of this by launching more phishing attacks on unsuspecting users.

Hacker News

APRIL 21, 2024

What can be done when one threatened animal kills another? Researchers at Washington University in St. Louis confronted this difficult reality when they witnessed attacks on critically endangered lemurs by another vulnerable species, a carnivore called a fosa.

Towards AI

APRIL 21, 2024

Last Updated on April 21, 2024 by Editorial Team. Author(s): Youssef Hosni. Originally published on Towards AI. A Comparison Between Chroma, Milvus, Faiss, and Weaviate Vector Databases. Semantic search and retrieval-augmented generation (RAG) are revolutionizing the way we interact online. However, the backbone enabling these groundbreaking advancements is often overlooked: vector databases.

Advertisement

Start-ups & SMBs launching products quickly must bundle dashboards, reports, & self-service analytics into apps. Customers expect rapid value from your product (time-to-value), data security, and access to advanced capabilities. Traditional Business Intelligence (BI) tools can provide valuable data analysis capabilities, but they have a barrier to entry that can stop small and midsize businesses from capitalizing on them.

Hacker News

APRIL 21, 2024

Who wouldn't want to use the image stabilization (IS) mechanism they've paid for to squeeze a bit more resolution out of their camera? People like Richard Butler, who question the effort/reward balance such high-resolution modes offer.

Smart Data Collective

APRIL 21, 2024

A new startup has announced major breakthroughs in blockchain technology with the launch of the Reactive Network.

Hacker News

APRIL 21, 2024

Drone footage captures the astonishing moment hundreds of emperor penguin chicks jump off a 50-foot cliff in Antarctica for the first time.

DagsHub

APRIL 21, 2024

If you are a Marvel fan, you might know that killing Thanos does not guarantee a safer world, as there are other villains to deal with. Similarly, while building any machine learning-based product or service, training and evaluating the model on a few real-world samples does not necessarily mean the end of your responsibilities. You need to make that model available to the end users, monitor it, and retrain it for better performance if needed.

Speaker: Scott Sehlhorst

We know we want to create products which our customers find to be valuable. Whether we label it as customer-centric or product-led depends on how long we've been doing product management. There are three challenges we face when doing this. The obvious challenge is figuring out what our users need; the non-obvious challenges are in creating a shared understanding of those needs and in sensing if what we're doing is meeting those needs.

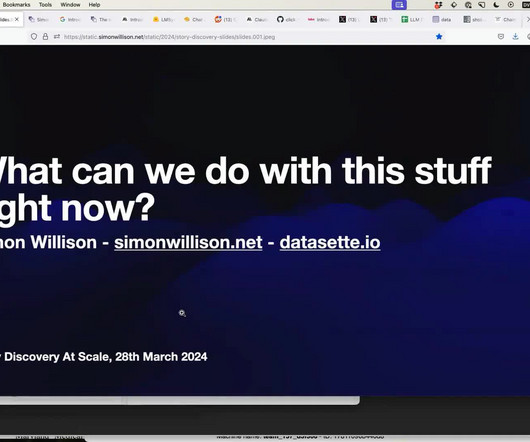

Hacker News

APRIL 21, 2024

I gave a talk last month at the Story Discovery at Scale data journalism conference hosted at Stanford by Big Local News.

Towards AI

APRIL 21, 2024

Last Updated on April 21, 2024 by Editorial Team. Author(s): Saankhya Mondal. Originally published on Towards AI. Working with tabular data? Pandas is the first Python library you’ll come across for tabular data preprocessing, and it is one of the most popular libraries in data science. Pandas is the go-to framework for working with small or medium-sized CSV files.

Hacker News

APRIL 21, 2024

Climate change is not just about global warming but also about effects that vary by location, including extreme heat and potential changes in ocean currents.

Hacker News

APRIL 21, 2024

We're living through a quiet global catastrophe of soulless boring buildings. Join the campaign, launched by Heatherwick Studio, to make cities more human.

Advertisement

While data platforms, artificial intelligence (AI), machine learning (ML), and programming platforms have evolved to leverage big data and streaming data, the front-end user experience has not kept up. Holding onto old BI technology while everything else moves forward is holding back organizations. Traditional Business Intelligence (BI) tools aren’t built for modern data platforms and don’t work on modern architectures.

Hacker News

APRIL 21, 2024

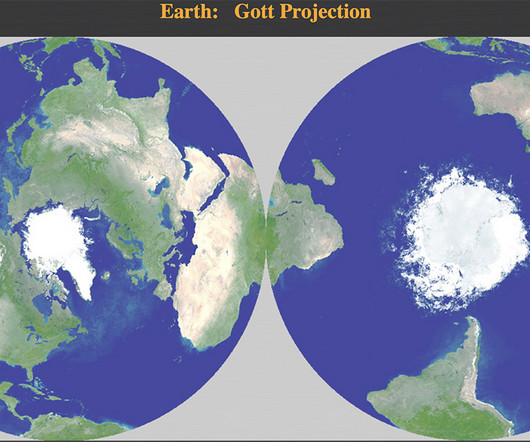

The webpage provides a dynamic interface to our new map projection. You can click anywhere on the map to center on different locations. You can also spin the map.

Hacker News

APRIL 21, 2024

With little competition at the government level, the Windows giant has no incentive to make its systems safer.

Hacker News

APRIL 21, 2024

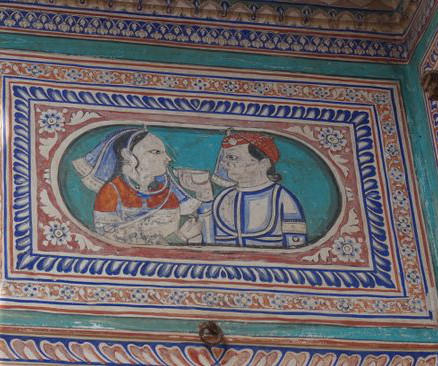

Maharani Mahansar Heritage Liquor is the modern manifestation of a nine-generation family tradition that has spanned monarchy, colonization, and independence.

Hacker News

APRIL 21, 2024

A recent study found the pesticide chlormequat, which has been linked to fertility issues in animals, in oat-based foods sold in the U.S. Should you be concerned? Experts share what to know.

Speaker: Timothy Chan, PhD, Head of Data Science

Are you ready to move beyond the basics and take a deep dive into the cutting-edge techniques that are reshaping the landscape of experimentation? 🌐 From Sequential Testing to Multi-Armed Bandits, Switchback Experiments to Stratified Sampling, Timothy Chan, Data Science Lead, is here to unravel the mysteries of these powerful methodologies that are revolutionizing how we approach testing.

Hacker News

APRIL 21, 2024

Environmental factors may have a more significant impact on certain cognitive abilities than genetics.

Hacker News

APRIL 21, 2024

Stay informed on the real-time status of the US Electric Grid with comprehensive monitoring and data.

Hacker News

APRIL 21, 2024

One group of capuchins uses stone tools, but neighbouring groups do not, suggesting that primates, including us, might enter the Stone Age simply by chance.

Hacker News

APRIL 21, 2024

Many decentralized identity protocols are being developed, which claim to increase users’ privacy and to enable interoperability and convenience.

Advertisement

In the rapidly-evolving world of embedded analytics and business intelligence, one important question has emerged at the forefront: How can you leverage artificial intelligence (AI) to enhance your application’s analytics capabilities? Imagine having an AI tool that answers your user’s questions with a deep understanding of the context in their business and applications, nuances of their industry, and unique challenges they face.
