Research Highlights: Scaling MLPs: A Tale of Inductive Bias

insideBIGDATA

JULY 5, 2023

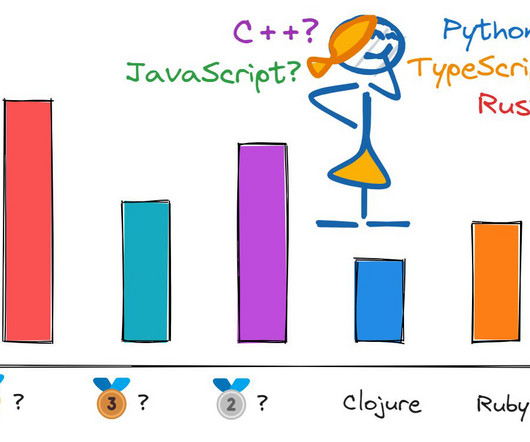

Multi-layer perceptrons (MLPs) are the most fundamental type of neural network. They play an important role in many machine learning systems and are the most theoretically studied architecture. A new paper from researchers at ETH Zurich pushes the limits of pure MLPs and shows that scaling them up yields much better performance than MLPs have been expected to achieve in the past.
