Discover the Groundbreaking LLM Development of Mixtral 8x7B

Analytics Vidhya

JANUARY 14, 2024

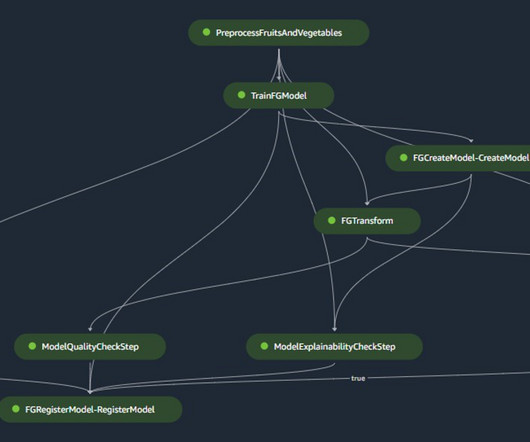

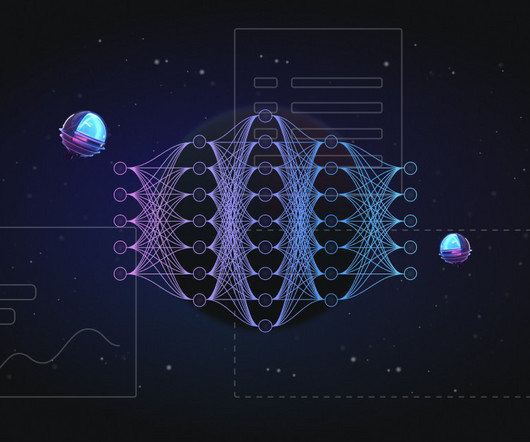

Introduction

The ever-evolving landscape of language model development saw the release of a groundbreaking paper: the Mixtral 8x7B paper. Released just a month ago, this model sparked excitement with its sparse “Mixture of Experts” (MoE) architecture.
