How I Redesigned over 100 ETL into ELT Data Pipelines

KDnuggets

NOVEMBER 15, 2021

Learn how to level up your Data Pipelines!

Data Science Dojo

JULY 6, 2023

Data engineering tools are software applications or frameworks specifically designed to facilitate the process of managing, processing, and transforming large volumes of data. Airflow: Apache Airflow is an open-source platform for orchestrating and scheduling data pipelines.

phData

JULY 12, 2023

Unlike traditional methods that rely on complex SQL queries for orchestration, Matillion Jobs provide a more streamlined approach. By converting SQL scripts into Matillion Jobs, users can take advantage of the platform’s advanced features for job orchestration, scheduling, and sharing. What is Matillion ETL?

phData

JUNE 14, 2023

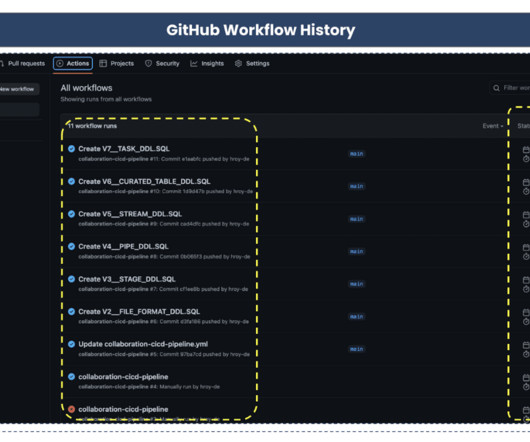

In recent years, data engineering teams working with the Snowflake Data Cloud platform have embraced the continuous integration/continuous delivery (CI/CD) software development process to develop data products and manage ETL/ELT workloads more efficiently.

phData

APRIL 21, 2023

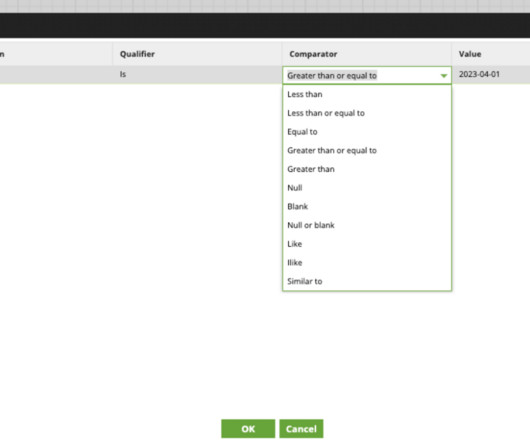

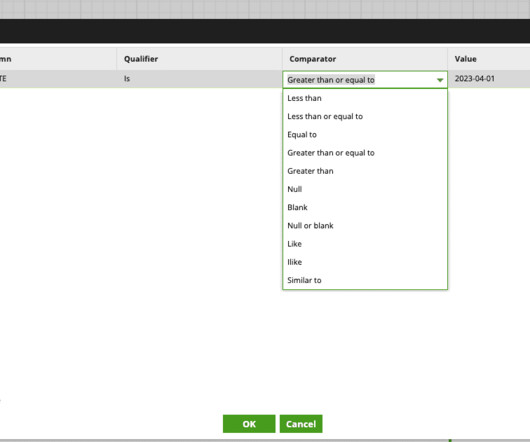

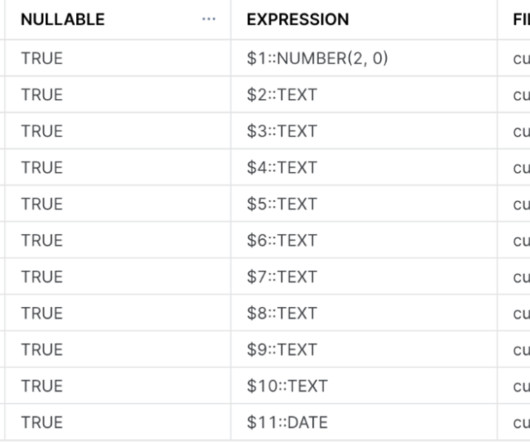

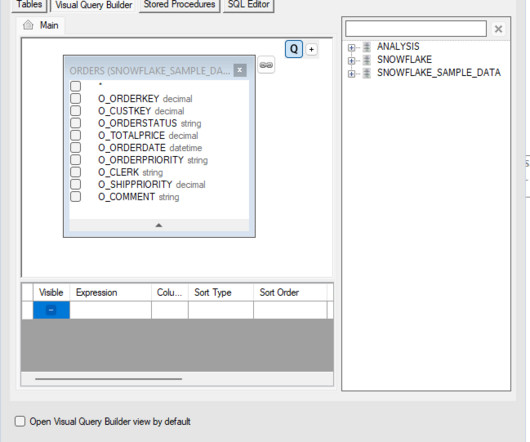

Unlike traditional methods that rely on complex SQL queries for orchestration, Matillion Jobs provide a more streamlined approach. By converting SQL scripts into Matillion Jobs, users can take advantage of the platform’s advanced features for job orchestration, scheduling, and sharing. In our case, this table is “orders.”

Analytics Vidhya

FEBRUARY 20, 2023

Introduction: Azure Data Factory (ADF) is a cloud-based data ingestion and ETL (Extract, Transform, Load) tool. The data-driven workflow in ADF orchestrates and automates data movement and data transformation.

phData

APRIL 29, 2024

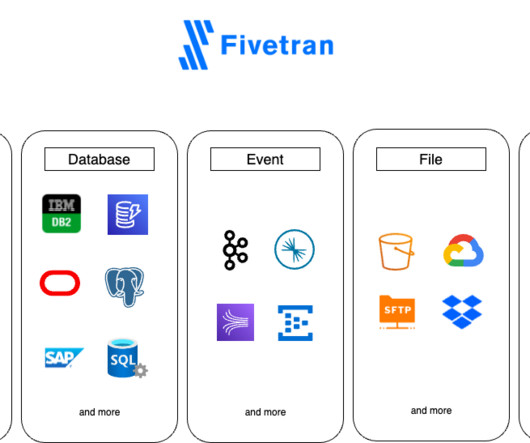

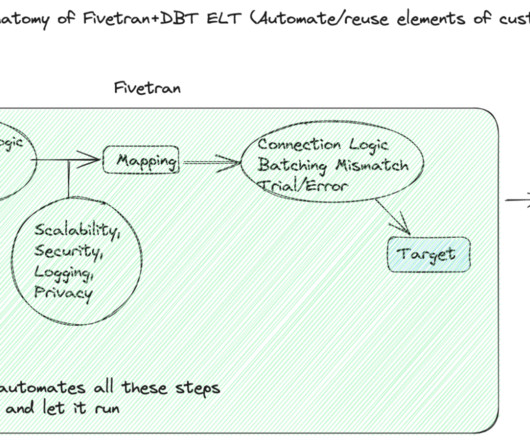

Understanding Fivetran Fivetran is a popular Software-as-a-Service platform that enables users to automate the movement of data and ETL processes across diverse sources to a target destination. For a longer overview, along with insights and best practices, please feel free to jump back to the previous blog.

Pickl AI

JULY 25, 2023

Data engineers are essential professionals responsible for designing, constructing, and maintaining an organization’s data infrastructure. They create data pipelines, ETL processes, and databases to facilitate smooth data flow and storage. Data Visualization: Matplotlib, Seaborn, Tableau, etc.

The MLOps Blog

MARCH 15, 2023

In this post, you will learn about the 10 best data pipeline tools, their pros, cons, and pricing. A typical data pipeline involves the following steps or processes through which the data passes before being consumed by a downstream process, such as an ML model training process.

IBM Journey to AI blog

MAY 15, 2024

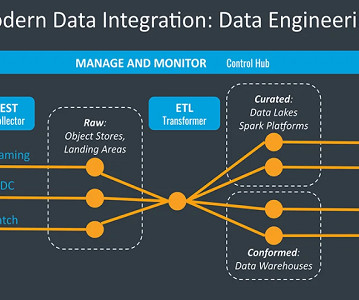

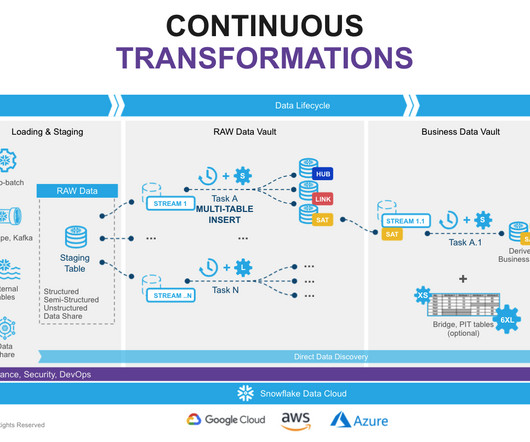

Two of the more popular methods, extract, transform, load (ETL) and extract, load, transform (ELT), are both highly performant and scalable. Data engineers build data pipelines, which are called data integration tasks or jobs, as incremental steps to perform data operations and orchestrate these data pipelines in an overall workflow.
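To make the difference between the two methods concrete, here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for a data warehouse. The sample rows, table names, and the `run_etl` and `run_elt` functions are hypothetical and are not taken from any of the articles listed here. In the ETL path the cleanup happens in application code before loading; in the ELT path the raw rows are loaded first and the cleanup runs as SQL inside the database.

```python
import sqlite3

# Hypothetical raw records, standing in for rows extracted from a source system.
RAW_ORDERS = [
    ("1", " alice ", "19.50"),
    ("2", "BOB", "5.00"),
    ("3", "Carol", "12.25"),
]


def run_etl(conn):
    """ETL: transform in application code, then load only the cleaned rows."""
    cleaned = [
        (int(order_id), name.strip().title(), float(amount))
        for order_id, name, amount in RAW_ORDERS
    ]
    conn.execute("CREATE TABLE orders_etl (id INTEGER, customer TEXT, amount REAL)")
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", cleaned)


def run_elt(conn):
    """ELT: load the raw rows unchanged, then transform with SQL in the database."""
    conn.execute("CREATE TABLE raw_orders (id TEXT, customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ORDERS)
    conn.execute(
        """
        CREATE TABLE orders_elt AS
        SELECT CAST(id AS INTEGER) AS id,
               UPPER(SUBSTR(TRIM(customer), 1, 1))
                   || LOWER(SUBSTR(TRIM(customer), 2)) AS customer,
               CAST(amount AS REAL) AS amount
        FROM raw_orders
        """
    )


conn = sqlite3.connect(":memory:")
run_etl(conn)
run_elt(conn)

etl_rows = conn.execute("SELECT * FROM orders_etl ORDER BY id").fetchall()
elt_rows = conn.execute("SELECT * FROM orders_elt ORDER BY id").fetchall()
print(etl_rows == elt_rows)
```

Both paths end with the same cleaned table. What differs is where the transformation runs and whether the raw data remains available in the warehouse for later re-transformation, which it does only in the ELT path.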

ODSC - Open Data Science

JANUARY 18, 2024

This individual is responsible for building and maintaining the infrastructure that stores and processes data; the kinds of data can be diverse, but most commonly it will be structured and unstructured data. They’ll also work with software engineers to ensure that the data infrastructure is scalable and reliable.

Pickl AI

JULY 3, 2023

Here are steps you can follow to pursue a career as a BI Developer: Acquire a solid foundation in data and analytics: Start by building a strong understanding of data concepts, relational databases, SQL (Structured Query Language), and data modeling.

phData

MARCH 1, 2024

There’s no need for developers or analysts to manually adjust table schemas or modify ETL (Extract, Transform, Load) processes whenever the source data structure changes. Time Efficiency – The automated schema detection and evolution features contribute to faster data availability.

phData

MARCH 14, 2024

In data analytics processes, choosing the right tools is crucial for ensuring efficiency and scalability. Two popular players in this area are Alteryx Designer and Matillion ETL, both offering strong solutions for handling data workflows with Snowflake Data Cloud integration.

Alation

JUNE 14, 2023

You don’t have to write ETL jobs.” That lowers the barrier to entry because you don’t have to be an ETL developer. Data Pipeline Capabilities This team’s scope is massive because the data pipelines are huge and there are many different capabilities embedded in them. Invest in automation.

Smart Data Collective

SEPTEMBER 8, 2021

The ETL process is defined as the movement of data from its source to destination storage (typically a Data Warehouse) for future use in reports and analyses. The data is initially extracted from a vast array of sources and then transformed and converted to a specific format based on business requirements.

ODSC - Open Data Science

SEPTEMBER 29, 2023

It truly is an all-in-one data lake solution. HPCC Systems and Spark also differ in that they work with distinct parts of the big data pipeline. Spark is more focused on data science, ingestion, and ETL, while HPCC Systems focuses on ETL and data delivery and governance. Tell me more about ECL.

The MLOps Blog

JANUARY 23, 2023

Dolt LakeFS Delta Lake Pachyderm Git-like versioning Database tool Data lake Data pipelines Experiment tracking Integration with cloud platforms Integrations with ML tools Examples of data version control tools in ML DVC Data Version Control DVC is a version control system for data and machine learning teams.

IBM Journey to AI blog

JANUARY 5, 2023

The right data architecture can help your organization improve data quality because it provides the framework that determines how data is collected, transported, stored, secured, used and shared for business intelligence and data science use cases. What does a modern data architecture do for your business?

phData

AUGUST 17, 2023

Data pipeline orchestration tools are designed to automate and manage the execution of data pipelines. These tools help streamline and schedule data movement and processing tasks, ensuring efficient and reliable data flow. What are Orchestration Tools?

The MLOps Blog

SEPTEMBER 7, 2023

Data Scientists and ML Engineers typically write lots and lots of code: code for exploratory analysis, experimentation code for modeling, ETLs for creating training datasets, Airflow (or similar) code to generate DAGs, REST APIs, streaming jobs, monitoring jobs, etc. Related post MLOps Is an Extension of DevOps.

phData

JULY 17, 2023

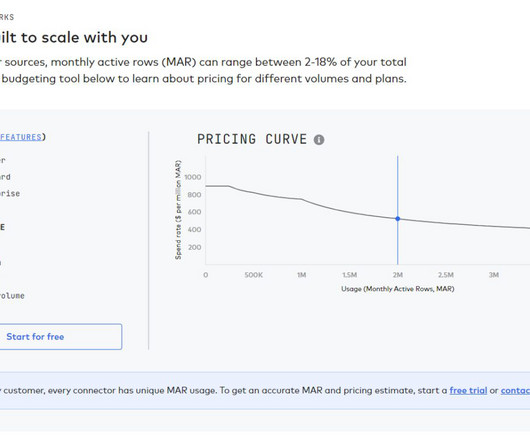

In order to fully leverage this vast quantity of collected data, companies need a robust and scalable data infrastructure to manage it. This is where Fivetran and the Modern Data Stack come in. This complexity often requires many hours of work from a large data engineering team to build and manually manage data pipelines.

phData

FEBRUARY 14, 2023

Source data formats can only be Parquet, JSON, or Delimited Text (CSV, TSV, etc.). StreamSets Data Collector: StreamSets Data Collector Engine is an easy-to-use data pipeline engine for streaming, CDC, and batch ingestion from any source to any destination.

phData

AUGUST 10, 2023

That said, dbt provides the ability to generate data vault models and also allows you to write your data transformations using SQL and code-reusable macros powered by Jinja2 to run your data pipelines in a clean and efficient way. The most important reason for using dbt in Data Vault 2.0

phData

OCTOBER 20, 2023

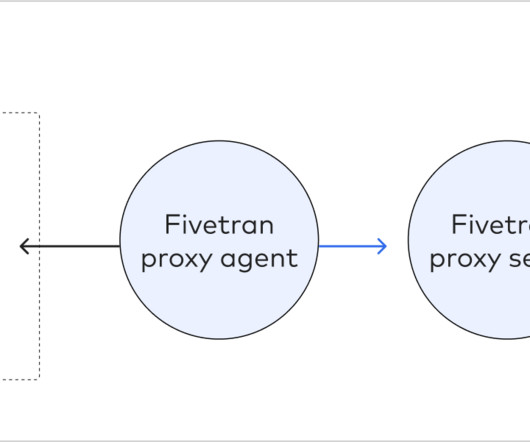

Some of the databases supported by Fivetran are: Snowflake Data Cloud (BETA), MySQL, PostgreSQL, SAP ERP, SQL Server, and Oracle. In this blog, we will review how to pull data from on-premises systems using Fivetran to a specific target or destination. Databases often write more information to a transaction log than is required.

phData

FEBRUARY 5, 2024

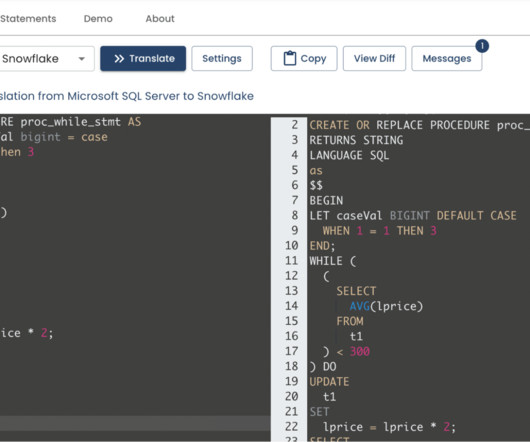

The tool converts the templated configuration into a set of SQL commands that are executed against the target Snowflake environment. Replicate can interact with a wide variety of databases, data warehouses, and data lakes (on-premise or based in the cloud). Essentially, it functions like Google Translate — but for SQL dialects.

phData

FEBRUARY 7, 2024

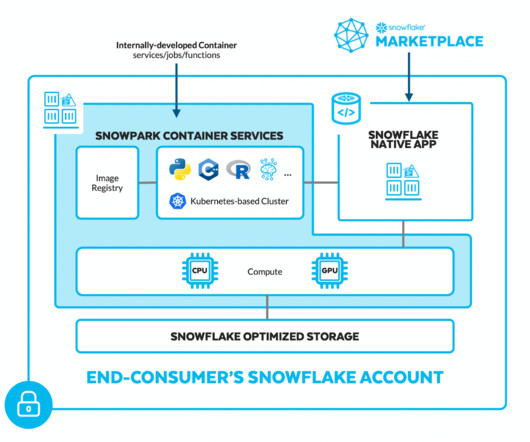

Snowpark is the set of libraries and runtimes in Snowflake that securely deploy and process non-SQL code, including Python, Java, and Scala. A DataFrame is like a query that must be evaluated to retrieve data. An action causes the DataFrame to be evaluated and sends the corresponding SQL statement to the server for execution.
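The lazy-evaluation idea described above can be sketched in plain Python. This is a toy illustration, not the Snowpark API: the `LazyFrame` class, its methods, and the `orders` table are all hypothetical, and the built-in `sqlite3` module stands in for the server. Transformations only record what the query should look like; the SQL statement is sent for execution when an action (`collect` here) is called.

```python
import sqlite3


class LazyFrame:
    """Toy lazily evaluated frame: transformations build SQL, actions run it.

    Hypothetical class for illustration only. Conditions and column names are
    inserted into the SQL text as-is, so it must not receive untrusted input.
    """

    def __init__(self, conn, table, filters=(), columns=("*",)):
        self.conn = conn
        self.table = table
        self.filters = tuple(filters)
        self.columns = tuple(columns)

    def filter(self, condition):
        # Transformation: returns a new frame, nothing is executed yet.
        return LazyFrame(self.conn, self.table, self.filters + (condition,), self.columns)

    def select(self, *columns):
        # Transformation: returns a new frame, nothing is executed yet.
        return LazyFrame(self.conn, self.table, self.filters, columns)

    def to_sql(self):
        sql = f"SELECT {', '.join(self.columns)} FROM {self.table}"
        if self.filters:
            sql += " WHERE " + " AND ".join(f"({f})" for f in self.filters)
        return sql

    def collect(self):
        # Action: only here is the SQL statement sent to the database.
        return self.conn.execute(self.to_sql()).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 10.0), (2, 50.0), (3, 75.0)])

big_orders = LazyFrame(conn, "orders").filter("amount > 20").select("id")
generated_sql = big_orders.to_sql()   # inspect the query without running it
result = sorted(big_orders.collect())  # the action triggers execution
print(generated_sql)
print(result)
```

Because each transformation returns a new object rather than running a query, several steps can be chained and still reach the database as a single SQL statement.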

Alation

JANUARY 17, 2023

It is known to have benefits in handling data due to its robustness, speed, and scalability. A typical modern data stack consists of the following: A data warehouse. Data ingestion/integration services. Reverse ETL tools. Data orchestration tools. A Note on the Shift from ETL to ELT.

Applied Data Science

AUGUST 2, 2021

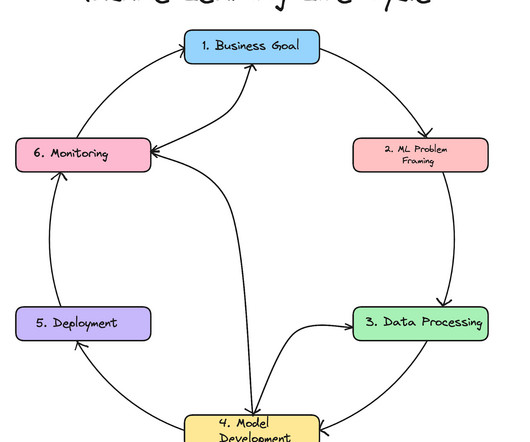

Automation Automating data pipelines and models ➡️ 6. The most common data science languages are Python and R; SQL is also a must-have skill for acquiring and manipulating data. The Data Engineer Not everyone working on a data science project is a data scientist.

phData

MARCH 8, 2023

It allows organizations to easily connect their disparate data sources without having to manage any infrastructure. Fivetran’s automated data movement platform simplifies the ETL (extract, transform, load) process by automating most of the time-consuming tasks of ETL that data engineers would typically do.

Alation

APRIL 4, 2023

However, the race to the cloud has also created challenges for data users everywhere, including: Cloud migration is expensive, migrating sensitive data is risky, and navigating between on-prem sources is often confusing for users. This empowers users to judge data’s quality and fitness for purpose quickly.

The MLOps Blog

JANUARY 26, 2024

Reference table for which technologies to use for your FTI pipelines for each ML system. Related article How to Build ETL Data Pipelines for ML See also MLOps and FTI pipelines testing Once you have built an ML system, you have to operate, maintain, and update it.

DagsHub

APRIL 7, 2024

Image generated with Midjourney In today’s fast-paced world of data science, building impactful machine learning models relies on much more than selecting the best algorithm for the job. Data scientists and machine learning engineers need to collaborate to make sure that together with the model, they develop robust data pipelines.

IBM Journey to AI blog

JULY 17, 2023

The next generation of Db2 Warehouse SaaS and Netezza SaaS on AWS fully support open formats such as Parquet and Iceberg table format, enabling the seamless combination and sharing of data in watsonx.data without the need for duplication or additional ETL.

phData

OCTOBER 17, 2023

The story is all too common – a business user requests some data, the data team creates/prioritizes a ticket, and said ticket is completed after some number of months (or weeks if you’re lucky) – just to have the data be wrong, and the whole process starts again. Those are scary for data teams to change.

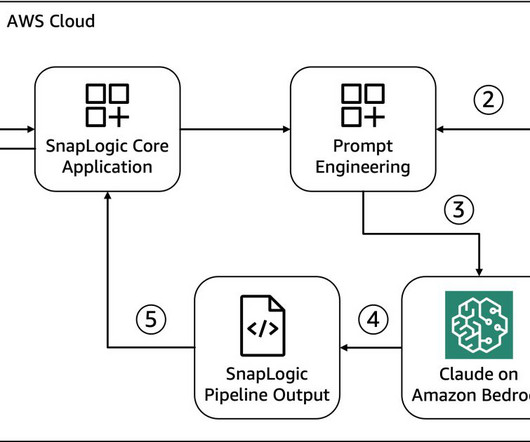

NOVEMBER 24, 2023

This use case highlights how large language models (LLMs) are able to become a translator between human languages (English, Spanish, Arabic, and more) and machine interpretable languages (Python, Java, Scala, SQL, and so on) along with sophisticated internal reasoning. Room for improvement!

IBM Journey to AI blog

AUGUST 4, 2023

Data fabric Data fabric architectures are designed to connect data platforms with the applications where users interact with information for simplified data access in an organization and self-service data consumption. Then, it applies these insights to automate and orchestrate the data lifecycle.

phData

JULY 18, 2023

Slow Response to New Information: Legacy data systems often lack the computation power necessary to run efficiently and can be cost-inefficient to scale. This typically results in long-running ETL pipelines that cause decisions to be made on stale or old data.
