Testing and Monitoring Data Pipelines: Part Two

Dataversity

JUNE 19, 2023

In part one of this article, we discussed how data testing can specifically test a data object (e.g., table, column, metadata) at one particular point in the data pipeline.

Data Science Dojo

JULY 6, 2023

Data engineering tools are software applications or frameworks specifically designed to facilitate the process of managing, processing, and transforming large volumes of data. Spark offers a rich set of libraries for data processing, machine learning, graph processing, and stream processing.

Data Science Connect

JANUARY 27, 2023

Data engineering is a crucial field that plays a vital role in the data pipeline of any organization. It is the process of collecting, storing, managing, and analyzing large amounts of data, and data engineers are responsible for designing and implementing the systems and infrastructure that make this possible.

The MLOps Blog

MARCH 15, 2023

If you ask data professionals what the most challenging part of their day-to-day work is, you will likely hear concerns about managing different aspects of data before they get to the data modeling stage. This ensures that the data is accurate, consistent, and reliable.

Pickl AI

JULY 25, 2023

Data engineers are essential professionals responsible for designing, constructing, and maintaining an organization’s data infrastructure. They create data pipelines, ETL processes, and databases to facilitate smooth data flow and storage. Big Data Processing: Apache Hadoop, Apache Spark, etc.

ODSC - Open Data Science

OCTOBER 15, 2023

Elementl / Dagster Labs Elementl and Dagster Labs are both companies that provide platforms for building and managing data pipelines. Elementl’s platform is designed for data engineers, while Dagster Labs’ platform is designed for data scientists. However, there are some critical differences between the two companies.

Iguazio

FEBRUARY 20, 2024

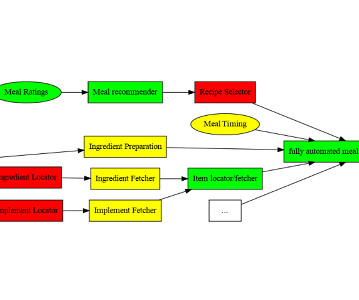

This includes management vision and strategy, resource commitment, data and tech and operating model alignment, robust risk management and change management. The required architecture includes a data pipeline, ML pipeline, application pipeline and a multi-stage pipeline. Read more here.

DagsHub

SEPTEMBER 10, 2023

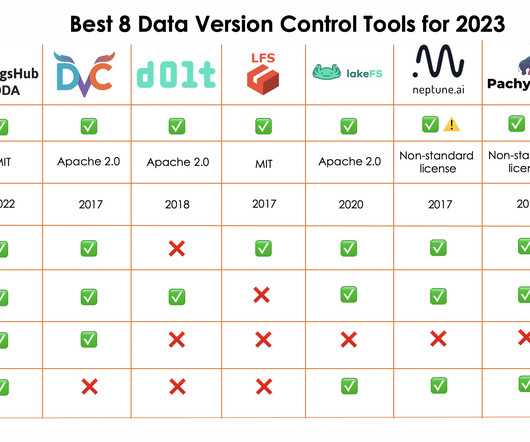

DagsHub is a centralized platform to host and manage machine learning projects, including code, data, models, experiments, annotations, model registry, and more! Intermediate Data Pipeline: Build data pipelines using DVC for automation and versioning of Open Source Machine Learning projects.

Pickl AI

JULY 3, 2023

It is the process of converting raw data into relevant and practical knowledge to help evaluate the performance of businesses, discover trends, and make well-informed choices. Data gathering, data integration, data modelling, information analysis, and data visualization are all part of business intelligence.

Alation

AUGUST 3, 2021

It brings together business users, data scientists, data analysts, IT, and application developers to fulfill the business need for insights. DataOps then works to continuously improve and adjust data models, visualizations, reports, and dashboards to achieve business goals. The Agile Connection.

IBM Journey to AI blog

SEPTEMBER 19, 2023

By analyzing datasets, data scientists can better understand their potential use in an algorithm or machine learning model. The data science lifecycle Data science is iterative, meaning data scientists form hypotheses and experiment to see if a desired outcome can be achieved using available data.

Tableau

OCTOBER 8, 2021

Leveraging Looker’s semantic layer will provide Tableau customers with trusted, governed data at every stage of their analytics journey. With its LookML modeling language, Looker provides a unique, modern approach to define governed and reusable data models to build a trusted foundation for analytics.

IBM Journey to AI blog

JANUARY 5, 2023

What does a modern data architecture do for your business? A modern data architecture like Data Mesh and Data Fabric aims to easily connect new data sources and accelerate development of use case specific data pipelines across on-premises, hybrid and multicloud environments.

IBM Journey to AI blog

OCTOBER 16, 2023

It includes processes that trace and document the origin of data, models and associated metadata and pipelines for audits. A data store lets a business connect existing data with new data and discover new insights with real-time analytics and business intelligence.

phData

AUGUST 10, 2023

That said, dbt provides the ability to generate data vault models and also allows you to write your data transformations using SQL and reusable macros powered by Jinja2 to run your data pipelines in a clean and efficient way. The most important reason for using dbt in Data Vault 2.0

Mlearning.ai

FEBRUARY 16, 2023

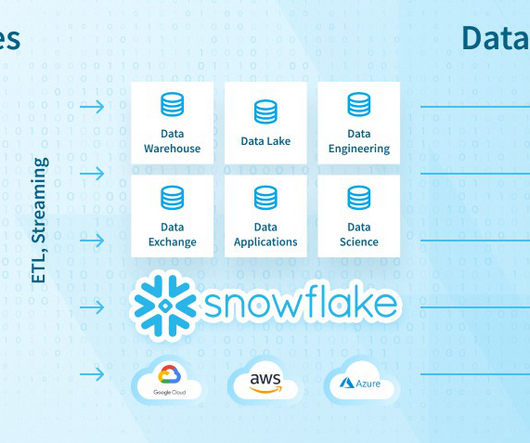

Thus, the solution allows for scaling data workloads independently from one another and seamlessly handling data warehousing, data lakes, data sharing, and engineering. Therefore, you’ll be empowered to truncate and reprocess data if bugs are detected and provide an excellent raw data source for data scientists.

Smart Data Collective

APRIL 2, 2019

Because of the types of databases that made their way into adoption during the nascent days of Big Data, we now have the problem of streamlining databases running on Hive or NoSQL that were never meant to process data sets as large as what our data lake holds. The Third Problem – Preparation of Data.

phData

NOVEMBER 2, 2023

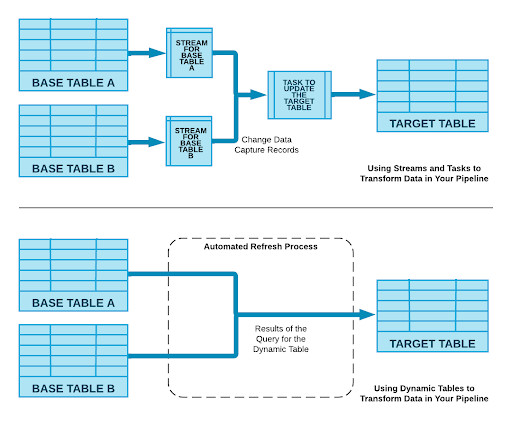

Managing data pipelines efficiently is paramount for any organization. The Snowflake Data Cloud has introduced a groundbreaking feature that promises to simplify and supercharge this process: Snowflake Dynamic Tables. What are Snowflake Dynamic Tables?

phData

JULY 17, 2023

In order to fully leverage this vast quantity of collected data, companies need a robust and scalable data infrastructure to manage it. This is where Fivetran and the Modern Data Stack come in. Snowflake Data Cloud Replication Transferring data from a source system to a cloud data warehouse.

phData

FEBRUARY 8, 2024

Data Modeling, dbt has gradually emerged as a powerful tool that largely simplifies the process of building and handling data pipelines. dbt is an open-source command-line tool that allows data engineers to transform, test, and document the data into one single hub which follows the best practices of software engineering.

ODSC - Open Data Science

APRIL 26, 2023

Data Engineer Data engineers are the authors of the infrastructure that stores, processes, and manages the large volumes of data an organization has. The main aspect of their profession is the building and maintenance of data pipelines, which allow for data to move between sources.

phData

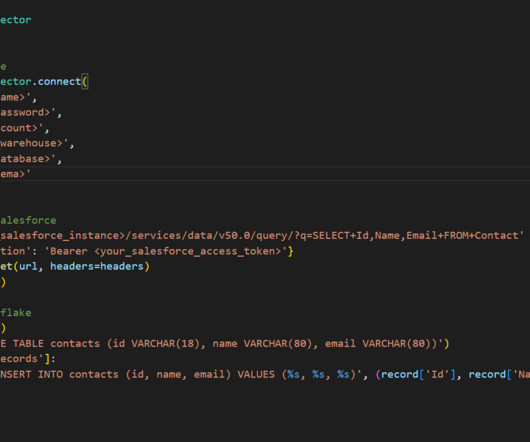

SEPTEMBER 13, 2023

Third-Party Tools Third-party tools like Matillion or Fivetran can help streamline the process of ingesting Salesforce data into Snowflake. With these tools, businesses can quickly set up data pipelines that automatically extract data from Salesforce and load it into Snowflake.

Dataversity

SEPTEMBER 28, 2022

Data engineering is a fascinating and fulfilling career – you are at the helm of every business operation that requires data, and as long as users generate data, businesses will always need data engineers. The journey to becoming a successful data engineer […]. In other words, job security is guaranteed.

Iguazio

JANUARY 22, 2024

Production App - Build resilient and modular production pipelines with automation, scale, testing, observability, versioning, security, risk handling, etc. Monitoring - Monitor all resources, data, model and application metrics to ensure performance. This helps cleanse the data.

Snorkel AI

MAY 12, 2023

The reason is that most teams do not have access to a robust data ecosystem for ML development. billion is lost by Fortune 500 companies because of broken data pipelines and communications. Publishing standards for data and governance of that data are either missing or very far from ideal.

phData

MAY 25, 2023

Model Your Data Appropriately Once you have chosen the method to connect to your data (Import, DirectQuery, Composite), you will need to make sure that you create an efficient and optimized data model. Here are some of our best practices for building data models in Power BI to optimize your Snowflake experience: 1.

Iguazio

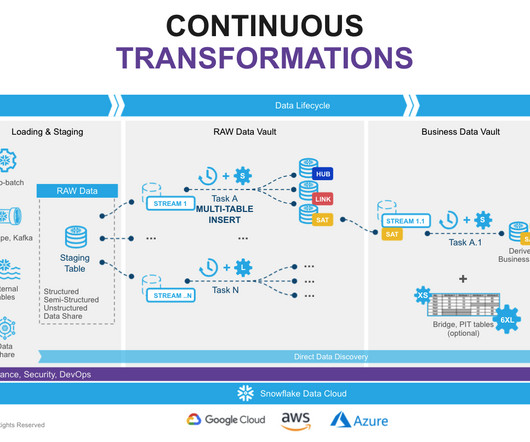

FEBRUARY 8, 2024

Data Pipeline - Manages and processes various data sources. ML Pipeline - Focuses on training, validation and deployment. Application Pipeline - Manages requests and data/model validations. Multi-Stage Pipeline - Ensures correct model behavior and incorporates feedback loops.

The MLOps Blog

JUNE 27, 2023

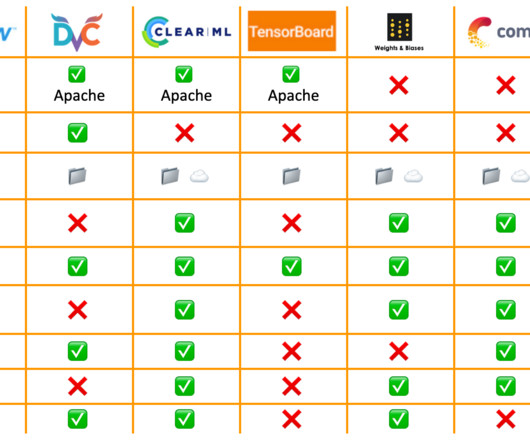

Model versioning, lineage, and packaging: Can you version and reproduce models and experiments? Can you see the complete model lineage with data/models/experiments used downstream? It could help you detect and prevent data pipeline failures, data drift, and anomalies.

Tableau

DECEMBER 7, 2022

Every company today is being asked to do more with less, and leaders need access to fresh, trusted KPIs and data-driven insights to manage their businesses, keep ahead of the competition, and provide unparalleled customer experiences. But good data—and actionable insights—are hard to get. The power of the customer graph keeps going.

DataRobot Blog

SEPTEMBER 13, 2022

Companies at this stage will likely have a team of ML engineers dedicated to creating data pipelines, versioning data, and maintaining operations monitoring data, models & deployments. By now, data scientists have witnessed success optimizing internal operations and external offerings through AI.

DagsHub

DECEMBER 11, 2023

DagsHub: DagsHub is a centralized GitHub-based platform that allows Machine Learning and Data Science teams to build, manage and collaborate on their projects. In addition to versioning code, teams can also version data, models, experiments and more. It does not support the ‘dvc repro’ command to reproduce its data pipeline.

ODSC - Open Data Science

FEBRUARY 8, 2024

How can we build up toward our vision in terms of solvable data problems and specific data products? What are we working towards? What are the dependencies (e.g. data sources or simpler data models) of the data products we want to build? How can we resolve those dependencies step-by-step?

ODSC - Open Data Science

MAY 10, 2023

Many mistakenly equate tabular data with business intelligence rather than AI, leading to a dismissive attitude toward its sophistication. Standard data science practices could also be contributing to this issue. One might say that tabular data modeling is the original data-centric AI!

Tableau

DECEMBER 7, 2022

Every company today is being asked to do more with less, and leaders need access to fresh, trusted KPIs and data-driven insights to manage their businesses, keep ahead of the competition, and provide unparalleled customer experiences. But good data—and actionable insights—are hard to get. What is Salesforce Data Cloud for Tableau?

Iguazio

DECEMBER 18, 2023

This will require investing resources in the entire AI and ML lifecycle, including building the data pipeline, scaling, automation, integrations, addressing risk and data privacy, and more. By doing so, you can ensure quality and production-ready models.

DagsHub

DECEMBER 5, 2023

The Git integration means that experiments are automatically reproducible and linked to their code, data, pipelines, and models. With DVC, we don't need to rebuild previous models or data modeling techniques to achieve the same past state of results.

DagsHub

APRIL 21, 2024

It is specially designed for monitoring highly dynamic containerized environments such as Kubernetes and provides powerful features for collecting, querying, visualizing, and alerting on time-series data. Apache Airflow Apache Airflow is an open-source workflow orchestration tool that can manage complex workflows and data pipelines.

Mlearning.ai

JUNE 16, 2023

Generative AI can be used to automate the data modeling process by generating entity-relationship diagrams or other types of data models and assist in UI design process by generating wireframes or high-fidelity mockups. GPT-4 Data Pipelines: Transform JSON to SQL Schema Instantly Blockstream’s public Bitcoin API.

The MLOps Blog

DECEMBER 28, 2022

Team composition: The team comprises data pipeline engineers, ML engineers, full-stack engineers, and data scientists. Organization: Acquia. Industry: Software-as-a-service. Team size: Acquia built an ML team five years ago in 2017 and has a team size of 6.

The MLOps Blog

MAY 9, 2023

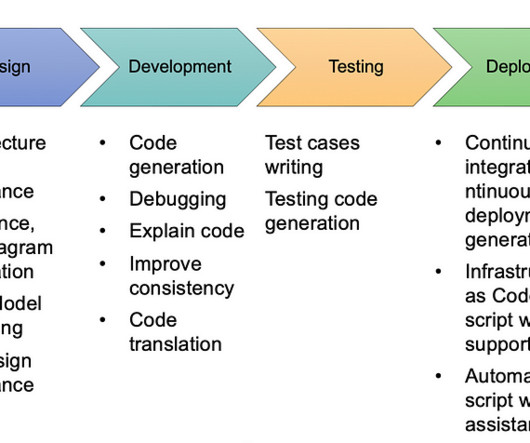

A typical machine learning pipeline with various stages highlighted | Source: Author

Common types of machine learning pipelines: In line with the stages of the ML workflow (data, model, and production), an ML pipeline comprises three different pipelines that solve different workflow stages.
