ETL vs ELT: Which One is Right for Your Data Pipeline?

KDnuggets

MARCH 31, 2023

Learn about the differences between ETL and ELT data integration techniques and determine which is right for your data pipeline.
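
As a rough illustration of the distinction this article covers, the sketch below runs the same cleanup both ways against an in-memory SQLite database. The table names, sample rows, and helper functions are all hypothetical and are not taken from the article. In the ETL path the transformation happens in application code before loading; in the ELT path the raw rows are loaded first and transformed with SQL inside the store.

```python
import sqlite3

# Hypothetical raw records, standing in for rows pulled from a source system.
RAW_ORDERS = [
    ("o-1", " Alice ", "19.99"),
    ("o-2", "BOB", "5.00"),
]


def run_etl(conn):
    """ETL: transform in application code, then load only the cleaned rows."""
    cleaned = [
        (oid, name.strip().title(), float(amount))
        for oid, name, amount in RAW_ORDERS
    ]
    conn.execute("CREATE TABLE orders_etl (id TEXT, customer TEXT, amount REAL)")
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", cleaned)


def run_elt(conn):
    """ELT: load the raw rows as-is, then transform with SQL inside the store."""
    conn.execute("CREATE TABLE orders_raw (id TEXT, customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO orders_raw VALUES (?, ?, ?)", RAW_ORDERS)
    conn.execute(
        """
        CREATE TABLE orders_elt AS
        SELECT id,
               UPPER(SUBSTR(TRIM(customer), 1, 1))
                   || LOWER(SUBSTR(TRIM(customer), 2)) AS customer,
               CAST(amount AS REAL) AS amount
        FROM orders_raw
        """
    )


def compare():
    """Run both approaches and return their final rows for comparison."""
    conn = sqlite3.connect(":memory:")
    run_etl(conn)
    run_elt(conn)
    etl_rows = conn.execute("SELECT * FROM orders_etl ORDER BY id").fetchall()
    elt_rows = conn.execute("SELECT * FROM orders_elt ORDER BY id").fetchall()
    conn.close()
    return etl_rows, elt_rows
```

Both paths end with the same cleaned rows. The practical difference is where the transformation runs and whether the untransformed data remains available in the destination afterwards.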

Analytics Vidhya

JULY 20, 2022

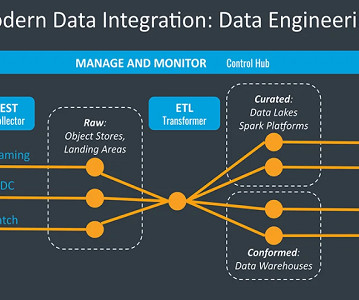

Introduction: Data acclimates to countless shapes and sizes to complete its journey from a source to a destination. Be it a streaming job or a batch job, ETL and ELT are irreplaceable. Before designing an ETL job, choosing optimal, performant, and cost-efficient tools […].

Data Science Dojo

JULY 6, 2023

Data engineering tools are software applications or frameworks specifically designed to facilitate the process of managing, processing, and transforming large volumes of data. Essential data engineering tools for 2023 Top 10 data engineering tools to watch out for in 2023 1.

Towards AI

MARCH 21, 2023

Navigating the World of Data Engineering: A Beginner’s Guide. Data or data? No matter how you read or pronounce it, data always tells you a story directly or indirectly. Data engineering can be interpreted as learning the moral of the story.

ODSC - Open Data Science

JANUARY 18, 2024

Data engineering is a rapidly growing field, and there is a high demand for skilled data engineers. If you are a data scientist, you may be wondering if you can transition into data engineering. In this blog post, we will discuss how you can become a data engineer if you are a data scientist.

Pickl AI

JULY 25, 2023

Unfolding the difference between data engineer, data scientist, and data analyst. Data engineers are essential professionals responsible for designing, constructing, and maintaining an organization’s data infrastructure. Read more to know.

phData

FEBRUARY 23, 2023

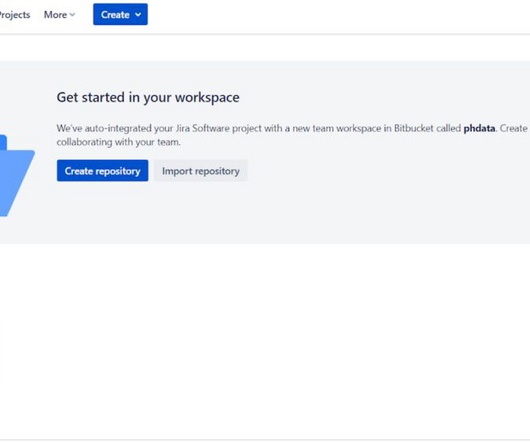

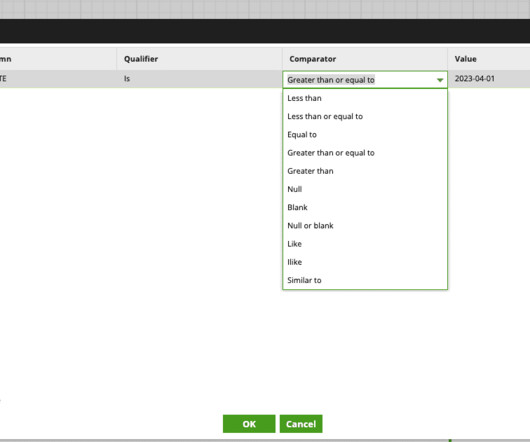

Matillion has a Git integration for Matillion ETL with Git repository providers, which can be used by your company to leverage your development across teams and establish a more reliable environment. What is Matillion ETL? To start, we’ll use the URL of your new BitBucket repository to point to the Matillion ETL platform later.

phData

JUNE 14, 2023

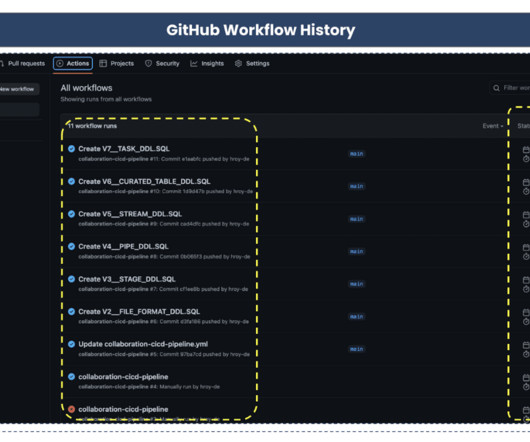

In recent years, data engineering teams working with the Snowflake Data Cloud platform have embraced the continuous integration/continuous delivery (CI/CD) software development process to develop data products and manage ETL/ELT workloads more efficiently. What Are the Benefits of CI/CD Pipeline For Snowflake?

Analytics Vidhya

FEBRUARY 20, 2023

Introduction: Azure Data Factory (ADF) is a cloud-based data ingestion and ETL (Extract, Transform, Load) tool. The data-driven workflow in ADF orchestrates and automates data movement and data transformation.

IBM Journey to AI blog

MAY 15, 2024

Two of the more popular methods, extract, transform, load (ETL) and extract, load, transform (ELT), are both highly performant and scalable. Data engineers build data pipelines, which are called data integration tasks or jobs, as incremental steps to perform data operations and orchestrate these data pipelines in an overall workflow.

The MLOps Blog

MAY 17, 2023

However, efficient use of ETL pipelines in ML can help make their life much easier. This article explores the importance of ETL pipelines in machine learning, a hands-on example of building ETL pipelines with a popular tool, and suggests the best ways for data engineers to enhance and sustain their pipelines.

phData

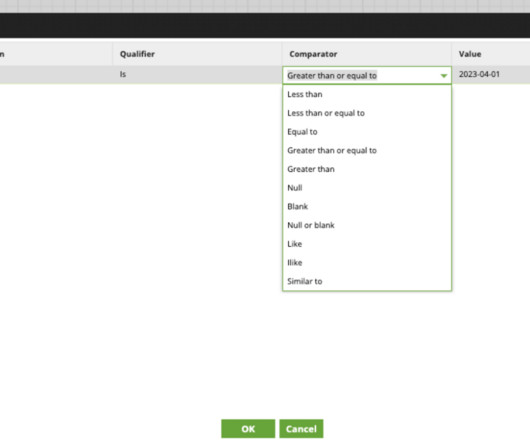

MARCH 1, 2024

There’s no need for developers or analysts to manually adjust table schemas or modify ETL (Extract, Transform, Load) processes whenever the source data structure changes. Time Efficiency – The automated schema detection and evolution features contribute to faster data availability.
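
The excerpt does not say how the automated schema evolution is implemented. Purely to illustrate the general idea, here is a minimal sketch that assumes a SQLite destination and a hypothetical `evolve_and_insert` helper: when an incoming record carries a field the table lacks, the table is widened before the insert instead of the load failing.

```python
import sqlite3


def evolve_and_insert(conn, table, record):
    """Add any columns the record has that the table lacks, then insert it.

    Illustrative only: identifiers are interpolated into SQL, so `table` and
    the record keys must come from a trusted source.
    """
    # PRAGMA table_info returns one row per column; index 1 is the column name.
    existing = {row[1] for row in conn.execute(f"PRAGMA table_info({table})")}
    for column in record:
        if column not in existing:
            # New source field: widen the table instead of failing the load.
            conn.execute(f'ALTER TABLE {table} ADD COLUMN "{column}"')
    columns = ", ".join(f'"{c}"' for c in record)
    placeholders = ", ".join("?" for _ in record)
    conn.execute(
        f"INSERT INTO {table} ({columns}) VALUES ({placeholders})",
        list(record.values()),
    )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER)")
evolve_and_insert(conn, "events", {"id": 1})
evolve_and_insert(conn, "events", {"id": 2, "country": "DE"})  # 'country' is new
rows = conn.execute("SELECT id, country FROM events ORDER BY id").fetchall()
```

Rows loaded before the new column existed simply read back as NULL for it, which is why nobody has to adjust the table by hand when the source changes.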

phData

NOVEMBER 28, 2023

In July 2023, Matillion launched their fully SaaS platform called Data Productivity Cloud, aiming to create a future-ready, everyone-ready, and AI-ready environment that companies can easily adopt and start automating their data pipelines coding, low-coding, or even no-coding at all. Why Does it Matter?

ODSC - Open Data Science

APRIL 4, 2024

Find out how to weave data reliability and quality checks into the execution of your data pipelines and more. More Speakers and Sessions Announced for the 2024 Data Engineering Summit Ranging from experimentation platforms to enhanced ETL models and more, here are some more sessions coming to the 2024 Data Engineering Summit.

IBM Journey to AI blog

APRIL 20, 2023

Read this e-book on building strong governance foundations Why automated data lineage is crucial for success Data lineage, the process of tracking the flow of data over time from origin to destination within a data pipeline, is essential to understand the full lifecycle of data and ensure regulatory compliance.

phData

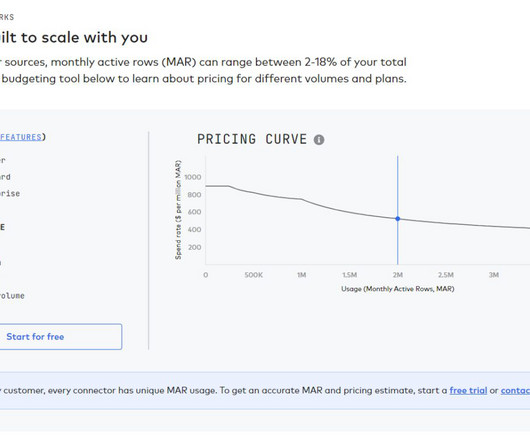

DECEMBER 14, 2023

phData is the right partner for Fivetran because of our deep expertise in data engineering, data warehousing, and the modern data stack. We also deeply understand the modern data stack, so we can help you choose the right tools and technologies for your needs. Is LDP a good ETL tool?

phData

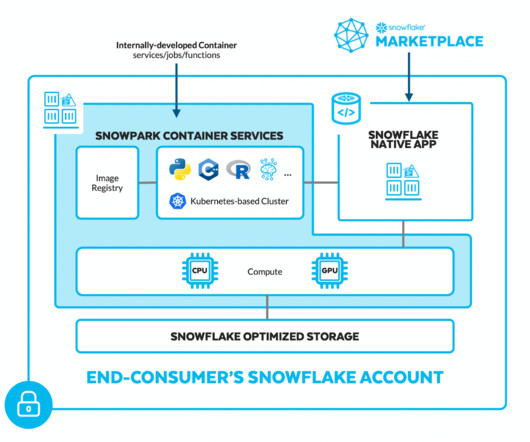

AUGUST 17, 2023

In the rapidly evolving landscape of data engineering, Snowflake Data Cloud has emerged as a leading cloud-based data warehousing solution, providing powerful capabilities for storing, processing, and analyzing vast amounts of data. What are Orchestration Tools?

Dataconomy

FEBRUARY 23, 2023

Data engineers play a crucial role in managing and processing big data. They are responsible for designing, building, and maintaining the infrastructure and tools needed to manage and process large volumes of data effectively. What is data engineering?

Alation

MARCH 22, 2022

As the latest iteration in this pursuit of high-quality data sharing, DataOps combines a range of disciplines. It synthesizes all we’ve learned about agile, data quality, and ETL/ELT. And it injects mature process control techniques from the world of traditional engineering. Take a look at figure 1 below.

Alation

MAY 16, 2023

But with the sheer amount of data continually increasing, how can a business make sense of it? Robust data pipelines. What is a Data Pipeline? A data pipeline is a series of processing steps that move data from its source to its destination. The answer?
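
The definition above, a series of processing steps that move data from a source to a destination, can be sketched in a few lines. The step functions and sample records here are hypothetical and only illustrate the shape of the idea; real pipelines add scheduling, retries, and monitoring on top.

```python
from functools import reduce


def drop_incomplete(records):
    """Step 1: discard records that are missing a 'value' field."""
    return (r for r in records if r.get("value") is not None)


def to_float(records):
    """Step 2: normalize 'value' to a float."""
    return ({**r, "value": float(r["value"])} for r in records)


def run_pipeline(source, steps):
    """Feed the source through each step in order and collect the result."""
    return list(reduce(lambda data, step: step(data), steps, source))


# Hypothetical source records; the list returned below plays the destination.
source = [
    {"id": 1, "value": "3.5"},
    {"id": 2, "value": None},
    {"id": 3, "value": "4"},
]
destination = run_pipeline(source, [drop_incomplete, to_float])
```

Because each step takes and returns an iterable of records, steps can be reordered or swapped without touching the others.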

Alation

JUNE 14, 2023

“We didn’t have access to hundreds of data engineers out in the marketplace,” Lavorini points out. “You don’t have to write ETL jobs.” That lowers the barrier to entry because you don’t have to be an ETL developer. Fifth Third leverages dbt for “embedded” governance. Invest in automation.

phData

JULY 12, 2023

In this blog, we’ll explore how Matillion Jobs can simplify the data transformation process by allowing users to visualize the data flow of a job from start to finish. What is Matillion ETL? Whether you’re new to Matillion or just looking to improve your ETL skills, keep reading to learn more!

phData

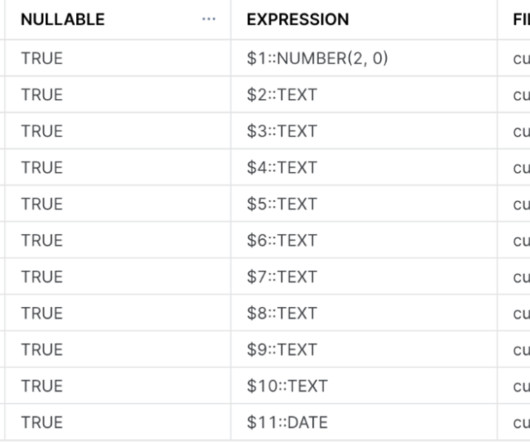

APRIL 21, 2023

In this blog, we’ll explore how Matillion Jobs can simplify the data transformation process by allowing users to visualize the data flow of a job from start to finish. With that, let’s dive in. What is Matillion ETL? Read Components: These are the components that define the source of data that is to be transformed.

The MLOps Blog

MARCH 15, 2023

Our activities mostly revolved around: 1 Identifying data sources 2 Collecting & Integrating data 3 Developing Analytical/ML models 4 Integrating the above into a cloud environment 5 Leveraging the cloud to automate the above processes 6 Making the deployment robust & scalable Who was involved in the project?

IBM Journey to AI blog

JANUARY 5, 2023

The first generation of data architectures represented by enterprise data warehouse and business intelligence platforms were characterized by thousands of ETL jobs, tables, and reports that only a small group of specialized data engineers understood, resulting in an under-realized positive impact on the business.

The MLOps Blog

JANUARY 23, 2023

However, there are some key differences that we need to consider: Size and complexity of the data In machine learning, we are often working with much larger data. Basically, every machine learning project needs data. Given the range of tools and data types, a separate data versioning logic will be necessary.

Kaggle

JULY 29, 2020

In August 2019, Data Works was acquired and Dave worked to ensure a successful transition. David: My technical background is in ETL, data extraction, data engineering and data analytics. What preprocessing and feature engineering did you do? David, what can you tell us about your background?

Data Science Dojo

MARCH 15, 2023

It is designed to assist data engineers in transforming, converting, and validating data in a simplified manner while ensuring accuracy and reliability. The Meltano CLI can efficiently handle complex data engineering tasks, providing a user-friendly interface that simplifies the ELT process.

The MLOps Blog

SEPTEMBER 7, 2023

Data Scientists and ML Engineers typically write lots and lots of code. From writing code for doing exploratory analysis, experimentation code for modeling, ETLs for creating training datasets, Airflow (or similar) code to generate DAGs, REST APIs, streaming jobs, monitoring jobs, etc.

phData

JULY 17, 2023

This is where Fivetran and the Modern Data Stack come in. Fivetran is a fully-automated, zero-maintenance data pipeline tool that automates the ETL process from data sources to your cloud warehouse. Because of this, it was hard for them to leverage their data and make data-driven decisions.

Data Science Dojo

FEBRUARY 20, 2023

We have Databricks, which is an open-source, next-generation data management platform. It focuses on two aspects of data management: ETL (extract-transform-load) and data lifecycle management. It provides a variety of tools for data engineering, including model training and deployment.

phData

FEBRUARY 14, 2023

Source data formats can only be Parquet, JSON, or Delimited Text (CSV, TSV, etc.). StreamSets Data Collector: StreamSets Data Collector Engine is an easy-to-use data pipeline engine for streaming, CDC, and batch ingestion from any source to any destination. The biggest reason is the ease of use.

Applied Data Science

AUGUST 2, 2021

Automation Automating data pipelines and models ➡️ 6. Team Building the right data science team is complex. With a range of role types available, how do you find the perfect balance of Data Scientists, Data Engineers and Data Analysts to include in your team? Big Ideas What to look out for in 2022 1.

phData

MARCH 8, 2023

It allows organizations to easily connect their disparate data sources without having to manage any infrastructure. Fivetran’s automated data movement platform simplifies the ETL (extract, transform, load) process by automating most of the time-consuming tasks of ETL that data engineers would typically do.

Smart Data Collective

OCTOBER 17, 2022

Engineering teams, in particular, can quickly get overwhelmed by the abundance of information pertaining to competition data, new product and service releases, market developments, and industry trends, resulting in information anxiety. Explosive data growth can be too much to handle. Can’t get to the data.

Dataconomy

FEBRUARY 12, 2024

The acronym ETL—Extract, Transform, Load—has long been the linchpin of modern data management, orchestrating the movement and manipulation of data across systems and databases. This methodology has been pivotal in data warehousing, setting the stage for analysis and informed decision-making.

Alation

JANUARY 17, 2023

It is known to have benefits in handling data due to its robustness, speed, and scalability. A typical modern data stack consists of the following: A data warehouse. Data ingestion/integration services. Reverse ETL tools. Data orchestration tools. A Note on the Shift from ETL to ELT. Data scientists.

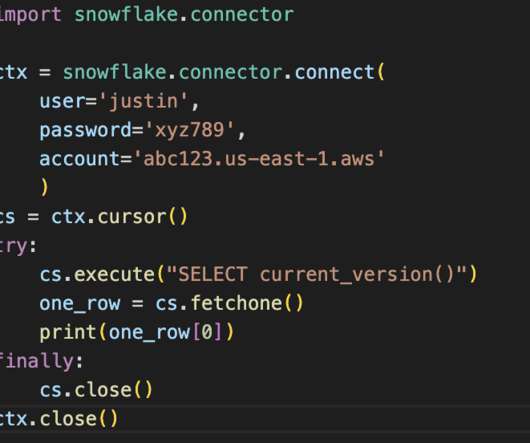

phData

JANUARY 5, 2023

Python is the top programming language used by data engineers in almost every industry. Python has proven proficient in setting up pipelines, maintaining data flows, and transforming data with its simple syntax and proficiency in automation. Truly a must-have tool in your data engineering arsenal!

phData

FEBRUARY 7, 2024

Snowpark Use Cases Data Science Streamlining data preparation and pre-processing: Snowpark’s Python, Java, and Scala libraries allow data scientists to use familiar tools for wrangling and cleaning data directly within Snowflake, eliminating the need for separate ETL pipelines and reducing context switching.

Alation

APRIL 4, 2023

However, the race to the cloud has also created challenges for data users everywhere, including: Cloud migration is expensive, migrating sensitive data is risky, and navigating between on-prem sources is often confusing for users. To build effective data pipelines, they need context (or metadata) on every source.

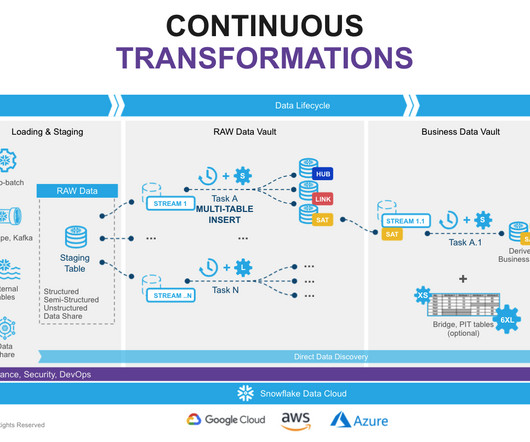

phData

AUGUST 10, 2023

That said, dbt provides the ability to generate data vault models and also allows you to write your data transformations using SQL and code-reusable macros powered by Jinja2 to run your data pipelines in a clean and efficient way. The most important reason for using dbt in Data Vault 2.0

Data Science Blog

FEBRUARY 4, 2023

If the data sources are additionally expanded to include the machines of production and logistics, much more in-depth analyses for error detection and prevention as well as for optimizing the factory in its dynamic environment become possible.

The MLOps Blog

DECEMBER 7, 2022

May be useful Best Workflow and Pipeline Orchestration Tools: Machine Learning Guide Phase 1—Data pipeline: getting the house in order Once the dust was settled, we got the Architecture Canvas completed, and the plan was clear to everyone involved, the next step was to take a closer look at the architecture. What’s in the box?
